Data Ingestion: 7 Challenges and 4 Best Practices

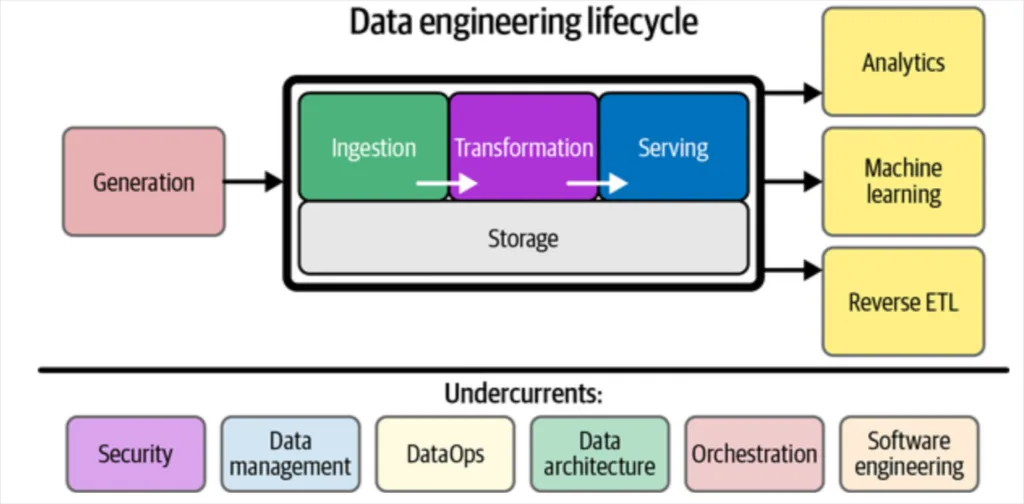

Data ingestion is the process of collecting data from various sources and moving it to your data warehouse or lake for processing and analysis. It is the first step in modern data management workflows.

While moving data from point A to point B sounds simple in theory, in practice data ingestion comes with its fair share of challenges that can impede the overall quality and usability of your data.

In this article, we’ll highlight seven common data ingestion challenges and share four best practices to help data orchestrators overcome them.

Table of Contents

- What is Data Ingestion?

- Types of Data Ingestion

- 7 Challenges to Data Ingestion

- Best Practices for Data Ingestion

- How data observability can complement your data ingestion

What is Data Ingestion?

Data ingestion is the process of acquiring and importing data for use, either immediately or in the future. Data can be ingested via either batch or stream processing.

Data ingestion works by transferring data from a variety of sources into a single common destination, where data orchestrators can then analyze it.

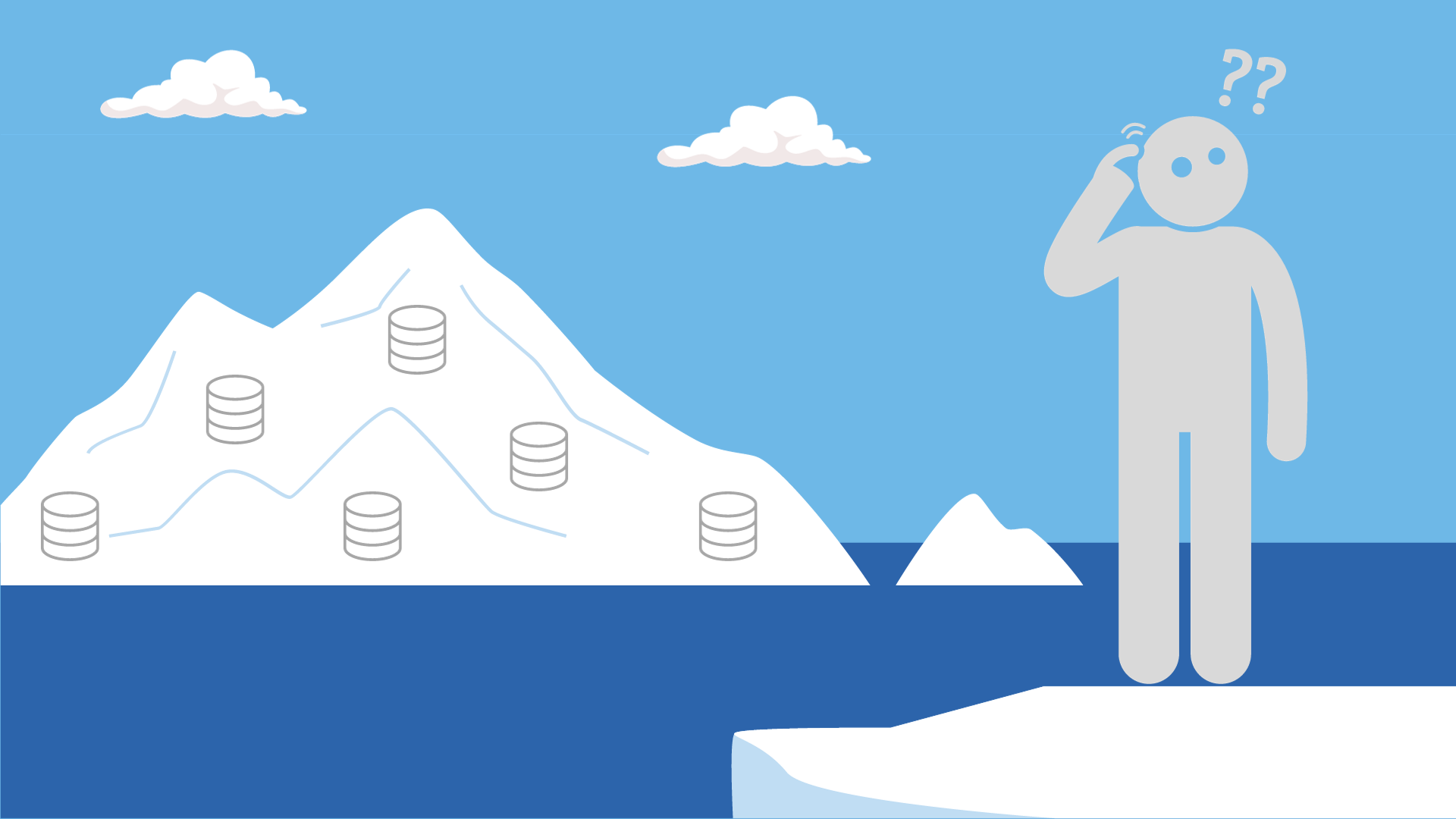

For many companies, the sources of data ingestion are voluminous. They include old-school spreadsheets, web scraping tools, IoT devices, proprietary apps, SaaS applications—the list goes on. Data from these sources are often ingested into a cloud-based data warehouse or data lake, where they can then be mined for information and insights.

Without ingesting data into a central data repository, organizations’ data would be trapped within silos. This would require data consumers to engage in the tedious process of having to log into each source system or SaaS platform to see just a tiny fraction of the bigger picture. Decision making would be slower and less accurate.

Data ingestion sounds straightforward, but in fact it’s quite complicated—and increasing volumes and varieties of data sources (aka big data) make collecting, compiling, and transforming data so it’s cohesive and usable a persistent challenge.

For example, APIs will often export data in JSON format and the ingestion pipeline will need to not only transport the data but apply light transformation to ensure it is in a table format that can be loaded into the data warehouse. Other common transformations done within the ingestion phase are data formatting and deduplication.
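The light transformation described above might look like the following minimal Python sketch. It assumes an API that returns a JSON array of nested records carrying an `id` field. The payload, the field names, and the `flatten` and `to_rows` helpers are hypothetical and only illustrate flattening plus deduplication.

```python
import json


def flatten(record, parent_key="", sep="_"):
    """Flatten nested dicts into a single-level dict with joined keys."""
    flat = {}
    for key, value in record.items():
        column = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, column, sep))
        else:
            flat[column] = value
    return flat


def to_rows(payload, key="id"):
    """Parse a JSON array, flatten each record, and keep the first row per key."""
    rows, seen = [], set()
    for record in json.loads(payload):
        row = flatten(record)
        if row[key] in seen:
            continue  # same record delivered twice: skip the repeat
        seen.add(row[key])
        rows.append(row)
    return rows


# Hypothetical API response: record 1 appears twice.
payload = json.dumps([
    {"id": 1, "user": {"name": "Ada", "plan": "pro"}},
    {"id": 1, "user": {"name": "Ada", "plan": "pro"}},
    {"id": 2, "user": {"name": "Lin", "plan": "free"}},
])
rows = to_rows(payload)
```

Each resulting row has flat column names such as `user_name`, which map directly onto warehouse table columns.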

Data ingestion tools that automatically deploy connectors to collect and move data from source to target system without requiring Python coding skills can help relieve some of this pressure.

Types of Data Ingestion

There are two overarching types of data ingestion: streaming and batch. Each data ingestion framework fulfills a different need regarding the timeline required to ingest and activate incoming data.

Streaming

Streaming data ingestion is exactly what it sounds like: data ingestion that happens in real time. This type of data ingestion often leverages change data capture (CDC) to continuously monitor transaction or redo logs, then move any changed data (e.g., a new transaction, an updated stock price, a power outage alert) to the destination data cloud without disrupting the database workload.
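To make the CDC idea concrete, here is a minimal sketch of the receiving side: change events read from a log are replayed in order against a destination table. The event shape (`op`, `key`, `row`) is an assumption for illustration, not the format of any particular CDC tool.

```python
def apply_changes(target, events):
    """Replay change events, in order, against a dict keyed by primary key."""
    for event in events:
        if event["op"] == "delete":
            target.pop(event["key"], None)
        else:
            # "insert" and "update" both write the latest row image
            target[event["key"]] = event["row"]
    return target


# Hypothetical destination table and change log.
destination = {1: {"symbol": "ACME", "price": 10.0}}
events = [
    {"op": "update", "key": 1, "row": {"symbol": "ACME", "price": 10.5}},
    {"op": "insert", "key": 2, "row": {"symbol": "INIT", "price": 3.2}},
    {"op": "delete", "key": 1, "row": None},
]
apply_changes(destination, events)
```

Only the changed rows travel to the destination, which is why this approach avoids re-reading the full source table.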

Streaming data ingestion is helpful for companies that deal with highly time-sensitive data. Examples include financial services companies analyzing constantly changing market information and power grid companies that need to monitor and react to outages in real time.

Streaming data ingestion tools:

- Apache Kafka, an open-source event streaming platform

- Amazon Kinesis, a streaming solution from AWS

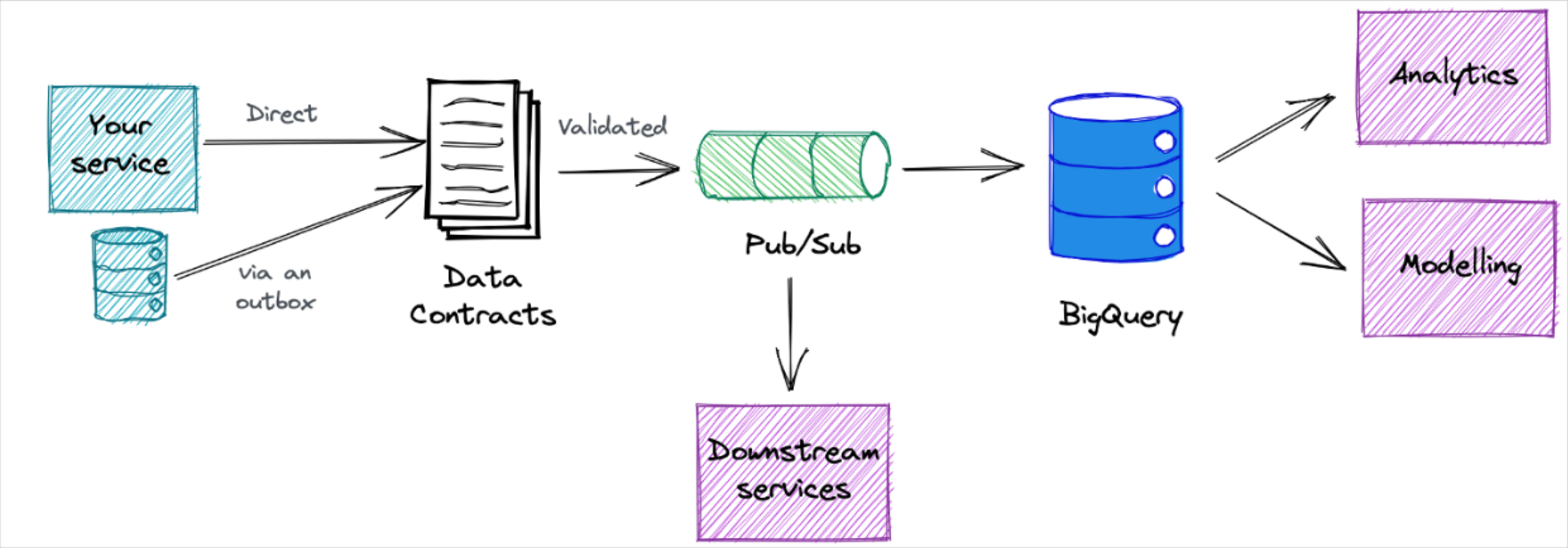

- Google Pub/Sub, a GCP service that ingests streaming data into BigQuery, data lakes, or operational databases

- Apache Spark, a unified analytics engine for large-scale data processing

- Upsolver, an ingestion tool built on Amazon Web Services to ingest data from streams, files and database sources in real time directly to data warehouses or data lakes

Batch

Batch data ingestion is the most commonly used type of data ingestion, and it’s used when companies don’t require immediate, real-time access to data insights. In this type of data ingestion, data moves in batches at regular intervals from source to destination.

Batch data ingestion is helpful for data users who create regular reports, like sales teams that share daily pipeline information with their CRO. For a use case like this, real-time data isn’t necessary, but reliable, regularly recurring data access is.

Some data teams will leverage micro-batch strategies for time-sensitive use cases. These involve data pipelines that will ingest data every few hours or even minutes.

Also worth noting is lambda architecture-based data ingestion, a hybrid model that combines features of both streaming and batch data ingestion. This method uses three layers: the batch layer, which offers an accurate, whole-picture view of a company’s data; the speed layer, which serves real-time insights more quickly but with slightly lower accuracy; and the serving layer, which combines outputs from both layers, so data orchestrators can view time-sensitive information more quickly while still maintaining access to the more accurate batch layer.
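The three layers can be sketched in a few lines. This is a simplified illustration that assumes both views hold additive counts per metric: the batch view covers everything up to the last batch run, and the speed view covers only events that arrived since. The `serving_view` function and the metric names are hypothetical.

```python
def serving_view(batch_view, speed_view):
    """Combine the complete-but-stale batch view with recent speed-layer increments."""
    merged = dict(batch_view)
    for key, recent in speed_view.items():
        merged[key] = merged.get(key, 0) + recent
    return merged


batch_view = {"orders": 1200, "signups": 340}  # recomputed by the batch layer
speed_view = {"orders": 17, "refunds": 2}      # accumulated since the last batch run
combined = serving_view(batch_view, speed_view)
```

When the next batch run completes, the speed view is reset and the batch view again becomes the source of record.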

Batch data ingestion tools:

- Fivetran, which manages data delivery from source to destination

- Singer, an open-source tool for moving data from a source to a destination

- Stitch, a cloud-based open-source platform that moves data from source to destination rapidly

- Airbyte, an open-source platform that easily allows data sync across applications

For a full list of modern data stack solutions across ingestion, orchestration, transformation, visualization, and data observability check out our article, “What Is A Data Platform And How To Build An Awesome One.”

7 Challenges to Data Ingestion

Data ingestion is a complex process, and comes with its fair share of obstacles. One of the biggest is that the source systems generating the data are often completely outside of the control of data engineers. Others include:

Time efficiency

When data engineers are tasked with ingesting data manually, they can face significant challenges in collecting, connecting, and analyzing that data in a centralized, streamlined way.

They need to write code that enables them to ingest data and create manual mappings for data extraction, cleaning, and loading. That not only causes frustration—it also shifts engineering focus away from critical, high-value tasks to repetitive, redundant ones.

This work can be easily automated for common data ingestion paths, for example connecting Salesforce to your data warehouse. However, connectors from data ingestion tools may not be available for more esoteric source systems. Or, the connector may not have a specific field for a particular source. In these cases, hard coding is often still required.

Schema changes and rise in data complexity

Since source systems are often outside of data engineers’ control, engineers can be surprised by changes in the schema, meaning the organization of the data. Even small changes in data type or the addition of a column can have a negative impact across the data pipeline.

When the schema changes, either the ingestion fails outright or, with some automated ingestion tools such as Fivetran, new tables are created for the updated schema. In the latter case the data does get ingested, but the change can still break transformation models and other dependencies across the pipeline.
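One way to catch these surprises early is to compare incoming records against the schema the pipeline expects. The sketch below is a minimal illustration. The `EXPECTED` schema, the field names, and the `schema_diff` helper are assumptions, and a real pipeline would also need to decide how to treat nulls.

```python
# Hypothetical expected schema: column name -> Python type.
EXPECTED = {"id": int, "email": str, "amount": float}


def schema_diff(expected, record):
    """Report columns that were added, dropped, or changed type in a record."""
    added = sorted(set(record) - set(expected))
    missing = sorted(set(expected) - set(record))
    retyped = sorted(
        name
        for name in set(expected) & set(record)
        if not isinstance(record[name], expected[name])
    )
    return {"added": added, "missing": missing, "retyped": retyped}


# The source started sending amount as a string and added a currency column.
incoming = {"id": 1, "email": "ada@example.com", "amount": "12.50", "currency": "USD"}
drift = schema_diff(EXPECTED, incoming)
```

A non-empty result can be logged or raised as an alert before downstream transformation models run.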

Simply put, data and pipelines are becoming more complex. Data use is no longer the remit of a single data team; rather, data use cases are exploding across the business, and multiple stakeholders across multiple departments are generating and analyzing data from highly variable sources. These sources themselves are constantly changing, and it’s difficult for data engineers to stay abreast of the latest evolution of data inputs.

When data is that diverse and complex, it can be challenging to extract value from it. The prevalence of anomalous data that requires cleaning, converting, and centralizing before it can be used presents a significant data ingestion challenge.

Changing ETL schedules

One organization saw its customer acquisition machine learning model suffer significant drift due to data pipeline reliability issues. The cause: Facebook began delivering its data every 12 hours instead of every 24.

Their team’s ETLs were set to pick up data only once per day, so this meant that suddenly half of the campaign data that was being sent wasn’t getting processed or passed downstream, skewing their new user metrics away from “paid” and towards “organic.”
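The source does not say how this team resolved the issue. One common way to make a pipeline robust to a changed delivery cadence is to track a watermark and process everything delivered since the last run, rather than assuming one delivery per run. The sketch below assumes each delivery carries a `delivered_at` timestamp; all names are hypothetical.

```python
from datetime import datetime


def run_once(deliveries, watermark):
    """Return every delivery newer than the watermark, plus the advanced watermark."""
    batch = sorted(
        (d for d in deliveries if d["delivered_at"] > watermark),
        key=lambda d: d["delivered_at"],
    )
    new_watermark = batch[-1]["delivered_at"] if batch else watermark
    return batch, new_watermark


# The source now delivers every 12 hours, while the job still runs daily.
deliveries = [
    {"file": "campaigns_0.json", "delivered_at": datetime(2024, 1, 1, 0, 0)},
    {"file": "campaigns_1.json", "delivered_at": datetime(2024, 1, 1, 12, 0)},
    {"file": "campaigns_2.json", "delivered_at": datetime(2024, 1, 2, 0, 0)},
]
batch, watermark = run_once(deliveries, datetime(2024, 1, 1, 0, 0))
```

Here the daily run picks up both deliveries made since the previous run instead of only the most recent one.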

Parallel architectures

Streaming and batch processing often require different data pipeline architectures. This can add complexity and require additional resources to manage well.

Job failures and data loss

Not only can ingestion pipelines fail, but orchestrators such as Airflow that schedule or trigger complex multi-step jobs can fail as well. This can lead to stale data or even data loss.

When the senior director of data at Freshly, Vitaly Lilich, evaluated ingestion solutions he paid particular attention to how each performed with respect to data loss. “Fivetran didn’t have any issues with that whereas with other vendors we did experience some records that would have been lost–maybe 10 to 20 a day,” he said.

Duplicate Data

Duplicate data is a frequent challenge during ingestion. It often results from jobs re-running after a human or system error.
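A common defense is to make the load step idempotent, so that re-running a job overwrites rows instead of appending copies. The sketch below uses Python's built-in `sqlite3` module as a stand-in for a warehouse. The `orders` table and the `load` helper are hypothetical, and the exact upsert or merge syntax varies by warehouse.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL)")


def load(conn, rows):
    """Insert rows keyed by order_id; a repeated key replaces the existing row."""
    conn.executemany(
        "INSERT OR REPLACE INTO orders (order_id, amount) VALUES (?, ?)",
        rows,
    )
    conn.commit()


rows = [(1, 19.99), (2, 5.00)]
load(conn, rows)
load(conn, rows)  # simulated accidental re-run of the same job

row_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

The design choice is to rely on a stable primary key from the source. Without one, deduplication has to fall back on comparing full rows or hashes.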

Compliance Requirements

Data is one of a company’s most valuable assets, and it needs to be treated with the utmost care, particularly when it contains sensitive or personally identifiable information about customers. As data moves through the various stages of the ingestion process, it risks noncompliant usage. Improper data ingestion can lead at best to an erosion of customer trust and at worst to regulatory fines.
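One technique teams sometimes apply at ingest is to pseudonymize sensitive fields before they land in the warehouse. The following sketch uses a keyed hash from Python's standard library. The field names, the `mask_record` helper, and the inline secret are assumptions for illustration, and whether this approach satisfies a given regulation depends on the requirements that apply to you.

```python
import hashlib
import hmac


def pseudonymize(value, secret):
    """Replace a value with a keyed SHA-256 digest (stable for a given secret)."""
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record, pii_fields, secret):
    """Return a copy of the record with the listed fields pseudonymized."""
    return {
        name: pseudonymize(str(value), secret) if name in pii_fields else value
        for name, value in record.items()
    }


secret = b"example-only-secret"  # in practice, load this from a secrets manager
record = {"id": 7, "email": "ada@example.com", "plan": "pro"}
masked = mask_record(record, {"email"}, secret)
```

Because the digest is deterministic for a given secret, masked values can still be used as join keys across tables.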

Best Practices for Data Ingestion

While data ingestion comes with multiple challenges, the following best practices can help reduce obstacles and enable teams to ingest, analyze, and leverage their data with confidence.

Automated Data Ingestion

Automated data ingestion solves many of the challenges posed by manual data ingestion efforts. Automated data ingestion acknowledges both the inevitability and the difficulty of transforming raw data into a usable form, especially when that raw data derives from multiple disparate sources at large volumes.

Automated data ingestion leverages data ingestion tools that automate recurring processes throughout the data ingestion process. These tools use event-based triggers to automate repeatable tasks, which saves time for data orchestrators while reducing human error.

Automated data ingestion has multiple benefits: it shortens the time needed for data ingestion while adding a layer of quality control. Because it frees up valuable resources on the data engineering team, it makes data ingestion efforts more scalable across the organization. Finally, by reducing manual processes, it decreases data processing time, which helps end users get the insights they need to make data-driven decisions more quickly.

Create data SLAs

One of the best ways to determine your ingestion approach (streaming vs. batch) is to gather the use case requirements from your data consumers and work backwards. Capturing those requirements in a data SLA makes them explicit. Data SLAs cover:

- What is the business need?

- What are the expectations for the data?

- When does the data need to meet expectations?

- Who is affected?

- How will we know when the SLA is met and what should the response be if it is violated?
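The last question, how you will know whether the SLA is met, can often be answered with an automated check. Below is a minimal sketch of a freshness check, assuming the SLA is expressed as a maximum allowed age for the most recent load. The `check_freshness` helper and the six-hour threshold are hypothetical.

```python
from datetime import datetime, timedelta


def check_freshness(last_loaded_at, now, max_age):
    """Compare the age of the latest load against the agreed maximum age."""
    age = now - last_loaded_at
    return {"met": age <= max_age, "age": age}


# Hypothetical SLA: the table must have been loaded within the last 6 hours.
sla = timedelta(hours=6)
now = datetime(2024, 1, 1, 12, 0)
on_time = check_freshness(datetime(2024, 1, 1, 8, 0), now, sla)
late = check_freshness(datetime(2024, 1, 1, 3, 0), now, sla)
```

A failed check is the trigger for whatever response the SLA specifies, such as paging the owning team or flagging dashboards as stale.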

Decouple your operational and analytical databases

Some organizations, especially those early in their data journey, will directly integrate their BI or analytics database with an operational database like Postgres. Decoupling these systems helps prevent issues in one from cascading into the other.

Data quality checks at ingest

Checking for data quality at ingest is a double-edged sword. Creating these tests for every possible instance of bad data across every pipeline is not scalable. However, this process can be beneficial in situations where thresholds are clear and the pipelines are fueling critical processes.

Some organizations will create data circuit breakers that will stop the data ingestion process if the data doesn’t pass specific data quality checks. There are trade-offs. Set your data quality checks too stringent and you impede data access. Set them too permissive and bad data can enter and wreak havoc on your data warehouse. Dropbox explains how they faced this dilemma in attempting to scale their data quality checks.

The solution to this challenge? Be selective with how you deploy circuit breakers and leverage data observability to help you quickly detect and resolve any data incidents that arise before they become problematic.
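A circuit breaker of the kind described above can be sketched as a check that raises before the load step runs. This example assumes a single rule, a maximum null rate on one required field. The `CircuitOpen` exception, the `check_batch` helper, and the 10% threshold are hypothetical.

```python
class CircuitOpen(Exception):
    """Raised to halt ingestion when a batch fails a data quality check."""


def check_batch(rows, required_field, max_null_rate):
    """Return the batch unchanged, or raise if too many rows lack the field."""
    if not rows:
        raise CircuitOpen("empty batch")
    nulls = sum(1 for row in rows if row.get(required_field) is None)
    null_rate = nulls / len(rows)
    if null_rate > max_null_rate:
        raise CircuitOpen(
            f"{required_field} null rate {null_rate:.0%} exceeds {max_null_rate:.0%}"
        )
    return rows


good = [{"email": "ada@example.com"}, {"email": "lin@example.com"}]
bad = [{"email": None}, {"email": "lin@example.com"}]

check_batch(good, "email", max_null_rate=0.1)  # passes, so the load can proceed
try:
    check_batch(bad, "email", max_null_rate=0.1)
    halted = False
except CircuitOpen:
    halted = True  # the load step is skipped for this batch
```

The threshold is where the trade-off lives: a lower `max_null_rate` blocks more bad data but also halts more loads.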

How data observability can complement your data ingestion

Testing critical data sets and checking data quality at ingest is a critical first step in ensuring high-quality, reliable data that’s as helpful and trusted as possible to end users. It’s just that, though—a first step. What if you’re trying to scale beyond your known issues? How can you ensure the reliability of your data when you’re unsure where problems may lie?

Data observability solutions like Monte Carlo can help data teams by providing visibility into failures at the ingestion, orchestration, and transformation levels. End-to-end lineage helps trace issues to the root cause and understand the blast radius at the consumer (BI) level.

Data observability provides coverage across the modern data stack—at ingest in the warehouse, across the orchestration layer, and ultimately at the business intelligence stage—to ensure the reliability of data at each stage of the pipeline.

Data ingestion paired with data observability is the combination that can truly activate a company’s data into a game-changer for the business.

Interested in learning more about how data observability can complement your data ingestion? Reach out to Monte Carlo today.
