Monte Carlo Data Observability Insights Now Available in the Snowflake Data Marketplace

Is your data quality improving? What is your most used data? Where in the pipeline are your most frequent data issues occurring?

With Snowflake Secure Data Sharing, building custom workflows and dashboards to answer these questions has never been easier.

I am excited to announce that Monte Carlo Data Observability Insights, end-to-end operational analytics for an organization’s data platform, is now available in the Snowflake Data Marketplace.

Previously, Monte Carlo customers accessed their Insights data by exporting CSV files directly from the Monte Carlo platform. With Snowflake Secure Data Sharing, customers can now consume their data as tables in their Snowflake environment to build custom workflows and dashboards.

Monte Carlo reduces data downtime with end-to-end observability and automatic alerts for data quality issues related to freshness, volume, distribution, schema, and lineage. Insights reports leverage machine learning to measure the reliability, performance, costs and effectiveness of organizations’ data initiatives across these key indicators of data health.

Insights reports can answer questions such as:

- Which tables are most widely and frequently used, and by whom?

- Which tables should we test?

- Which tables can be deprecated due to lack of use or relevance?

- How is the number of incidents and resulting downtime trending over time?

- Which teams are following data quality best practices?

- Are we meeting our data SLAs?

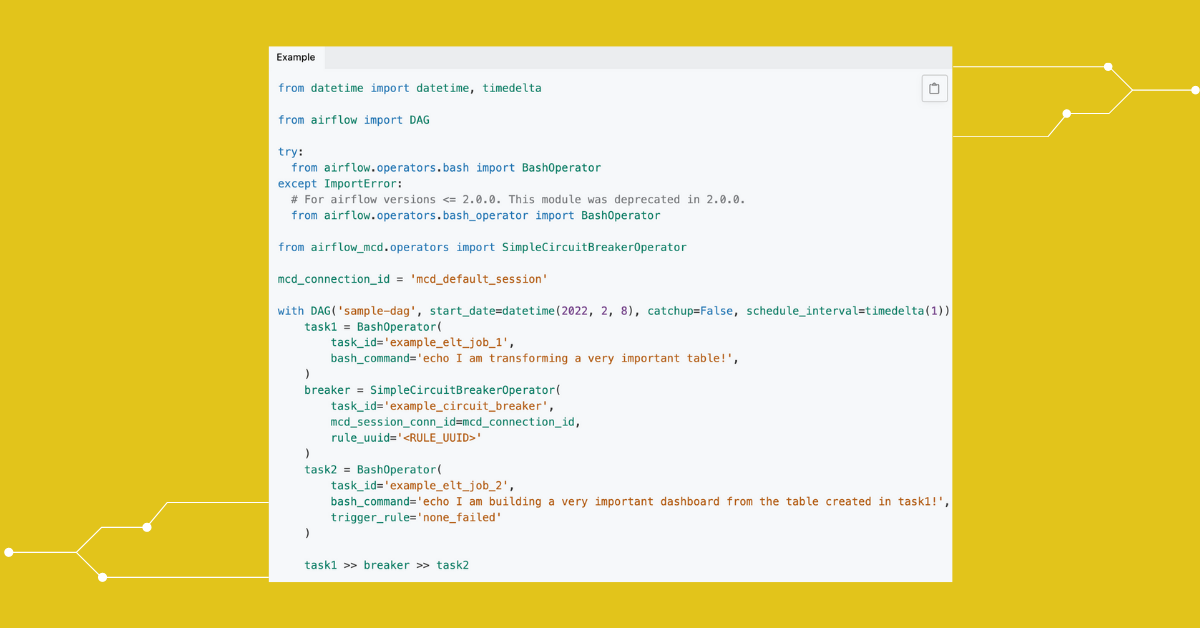

Out-of-the-box Insights reports, now available to be consumed within Snowflake, include:

- Key Assets: Identify important Snowflake tables based on usage, access and data quality checks.

- Coverage Overview: Measure the level of monitor coverage across critical schemas and tables in your Snowflake environments.

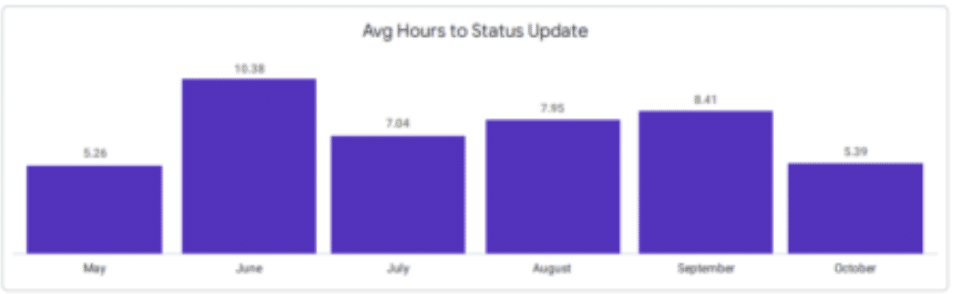

- Events: Calculate incident response rate and time to first response. See trends in data quality anomalies and schema changes across your Snowflake pipelines.

- Table Read/Write Activity: Gauge the importance of tables based on their read/write activity in Snowflake.

- Incident Query Changes: Determine if an incident was caused by a new or updated Snowflake query within the last two weeks.

- Deteriorating Queries: Prevent data downtime and reduce infrastructure costs by identifying queries at risk of timing out, not scaling well or increasing compute time.

- Rule and SLI Results: Fine-tune and track progress towards SLAs and SLOs in Snowflake.

- Dimension Tracking Suggestions: Automatically identify the 500 most-used fields in your Snowflake environment and apply dimension tracking monitors to catch issues with critical data sets.

- Field Health Suggestions: Automatically identify the 100 most-used tables in your Snowflake environment to monitor for field health changes at each stage in the pipeline.

ShopRunner is one Monte Carlo – Snowflake customer that is excited to benefit from secure data sharing.

The members-only e-commerce platform integrated their Snowflake and Looker data stack with Monte Carlo’s Data Observability Platform to gain unprecedented visibility into their data ecosystem through end-to-end lineage and ensure data is accurate and up-to-date at each stage of its life cycle.

“[Insights is] a new and powerful way to understand what data assets matter most to our business and how we can better drive an impact with our data across the organization,” said Valerie Rogoff, Director of Data Analytics Architecture at ShopRunner.

With the raw data available in Snowflake, customers can also customize their reporting and even combine it with data from other solutions.
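As a rough illustration of this kind of custom reporting, the sketch below computes an incident response rate and a median time to first response from a handful of event rows. It assumes the rows have already been fetched from a shared table; the field names (`incident_id`, `created_at`, `first_response_at`) and the sample values are hypothetical and do not reflect the actual Insights schema.

```python
from datetime import datetime
from statistics import median

# Hypothetical rows, shaped like what a query against a shared events table
# might return. Field names and values are illustrative assumptions only.
events = [
    {"incident_id": "a1", "created_at": datetime(2021, 6, 1, 9, 0),
     "first_response_at": datetime(2021, 6, 1, 9, 30)},
    {"incident_id": "a2", "created_at": datetime(2021, 6, 2, 14, 0),
     "first_response_at": datetime(2021, 6, 2, 15, 30)},
    {"incident_id": "a3", "created_at": datetime(2021, 6, 3, 8, 0),
     "first_response_at": datetime(2021, 6, 3, 9, 0)},
    {"incident_id": "a4", "created_at": datetime(2021, 6, 4, 11, 0),
     "first_response_at": None},  # no one has responded to this incident yet
]


def response_metrics(rows):
    """Return (response_rate, median_minutes_to_first_response)."""
    if not rows:
        return 0.0, None
    # Minutes between incident creation and first response, for responded incidents only.
    delays = [
        (row["first_response_at"] - row["created_at"]).total_seconds() / 60
        for row in rows
        if row["first_response_at"] is not None
    ]
    rate = len(delays) / len(rows)
    return rate, (median(delays) if delays else None)


rate, median_minutes = response_metrics(events)
print(f"response rate: {rate:.0%}, median time to first response: {median_minutes} min")
```

The same calculation could be written directly in SQL against the shared tables; Python is used here only to keep the example self-contained.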

Financial network tastytrade is accessing Monte Carlo’s observability insights directly in Snowflake as part of their efforts to scale and consolidate multiple Looker dashboards within a centralized “command center” to power analytics workloads across the company.

“With Monte Carlo and Snowflake Data sharing, I can create dashboards on utilization, costs, and all my data operational analytics in a central place I check every day,” said Alex Welch, VP of Enterprise Data, Analytics, at tastytrade. “It’s yet another way data observability has saved our team time while maintaining the highest standards for data quality in Snowflake.”

If you are interested in learning how you can enable Snowflake Data Sharing and obtain end-to-end visibility into operational analytics across your data stack, contact your Monte Carlo customer success representative, check out our docs, or email support@montecarlodata.com.

Want to get started with data observability for Snowflake? Book a time to speak with us in the form below.

