How Monte Carlo and Snowflake Gave Vimeo a “Get Out Of Jail Free” Card For Data Fire Drills

This article is based on an interview between Lior Solomon, (now former) VP of Engineering, Data, at Vimeo, and the co-founders of Firebolt on their Data Engineering Show podcast, which took place on August 18, 2021. Watch the full episode.

Vimeo is a leading video hosting, sharing, and services platform provider. The 1,000+ employee company helps small, medium, and enterprise businesses scale with the impact of video.

Vimeo’s data team operates at massive scale, processing more than 85 billion events per month. The team needs to deliver data as close to real time as possible and ensure its reliability. The data not only informs critical internal decision making; it is also public facing through features such as machine learning-powered video recommendations, and it is a key part of Vimeo’s value proposition to enterprise customers.

Vimeo employs more than 35 data engineers across data platform, video analytics, enterprise analytics, BI, and DataOps teams. They operate one of the most sophisticated and robust data platforms in media.

“We have a couple of data warehouses with about a petabyte in Snowflake, 1.5 petabytes in BigQuery, and about half a petabyte in Apache HBase,” said Lior Solomon, former VP of Engineering, Data, at Vimeo.

In 2021, Vimeo moved from a process built on large, complicated ETL pipelines and data warehouse transformations to one focused on schemas defined by data consumers and managed self-service analytics.

“The previous set up involved pushing whatever unstructured data you wanted into a homebrew pipeline, and unsurprisingly after 16 years, you started to see thousands of lines of code from all the massaging of the data on the ETLs by the BI team that extract the data, push into Kafka and drop into Snowflake where there is then on top of that ETLs that make sense out of it,” said Lior. “There were a couple of challenges because it’s easy to break this type of pipeline and an analyst would work for quite a while to find the data he’s looking for.”

The new process is focused on analytics efficiency. It involves a contract with the client sending the data, a schema registry, and pipeline owners who are responsible for fixing any issues. Vimeo’s DataOps team helps analytics teams in the self-service process to keep an eye on the big picture, leverage previous work, and prevent duplication.
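
To make the idea of a contract with the sending client concrete, here is a minimal sketch of what checking an event against a contract could look like. It is not Vimeo’s implementation: the `Contract` class, the `video_play` event, its field names, and the owning team are all hypothetical, chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    """A hypothetical data contract: expected fields plus an accountable owner."""
    name: str
    owner: str    # team responsible for fixing violations (assumed convention)
    fields: dict  # field name -> required Python type


# Illustrative contract; the event and field names are assumptions.
VIDEO_PLAY = Contract(
    name="video_play",
    owner="video-analytics",
    fields={"user_id": int, "video_id": int, "watched_seconds": float},
)


def violations(event: dict, contract: Contract) -> list[str]:
    """Return human-readable contract violations (an empty list means valid)."""
    problems = []
    for field, expected in contract.fields.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        elif not isinstance(event[field], expected):
            actual = type(event[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


good = {"user_id": 1, "video_id": 2, "watched_seconds": 3.5}
bad = {"user_id": "1", "video_id": 2}

print(violations(good, VIDEO_PLAY))
print(violations(bad, VIDEO_PLAY))
```

Keeping the owner on the contract itself means a violation can be routed straight to the team expected to fix it, which matches the ownership model described above.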

Challenge: Building Trust with the Business

On the heels of this organizational shift, Lior started prioritizing building data trust and availability across the entire organization.

“We’re actually expanding our machine learning teams and going more in that direction. It would be hard to advocate for hiring more and taking on more risk for the business without creating that sense of trust in data,” said Lior. “We have spent a lot of the last year on creating data SLAs or SLOs making sure teams have a clear expectation of the business and what’s the time to respond to any data outage.”

Before turning to Monte Carlo for end-to-end data observability, Vimeo first attempted to build an in-house solution to detect and resolve data issues.

“We had a schema registry with a fancy framework that knows if the data is right or wrong,” said Lior. “But what it couldn’t tell you is if there were any anomalies in the data.”
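
The gap Lior describes can be illustrated with a small sketch: every row may pass schema validation while the table as a whole still behaves abnormally, for example when the daily row count suddenly drops. The check below uses a plain z-score over recent row counts. It is an assumption made for illustration, not the method Monte Carlo or Vimeo uses, and the function name, threshold, and sample numbers are hypothetical.

```python
from statistics import mean, stdev


def is_volume_anomaly(history, today, threshold=3.0):
    """Flag today's row count if it sits more than `threshold` sample
    standard deviations away from the mean of the historical counts."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today != mu  # perfectly flat history: any change is unusual
    return abs(today - mu) / sigma > threshold


# Hypothetical daily row counts for one table (all rows schema-valid).
daily_rows = [1000, 1020, 980, 1010, 990, 1005, 995]

print(is_volume_anomaly(daily_rows, 1008))  # an ordinary day
print(is_volume_anomaly(daily_rows, 400))   # a sharp drop in volume
```

A schema check would accept both days, since the rows themselves are well formed; only a statistical view of the table over time separates them.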

Vimeo also leveraged Great Expectations to test their ETLs, and while it was a piece of the puzzle, the team couldn’t reach the scale they required. Anodot, which they use for more granular anomaly detection use cases for their data science team, also did not meet their requirements for scale.

“We use Great Expectations, which is great, but it takes time to implement for the whole pipeline and all the ETLs you have to protect. And unfortunately, usually you prioritize your efforts by how many issues you’ve had or who is shouting at you more,” said Lior. “I think Anodot is good for specific anomaly detection of specific business logic.”

Solution: Data Observability with Monte Carlo

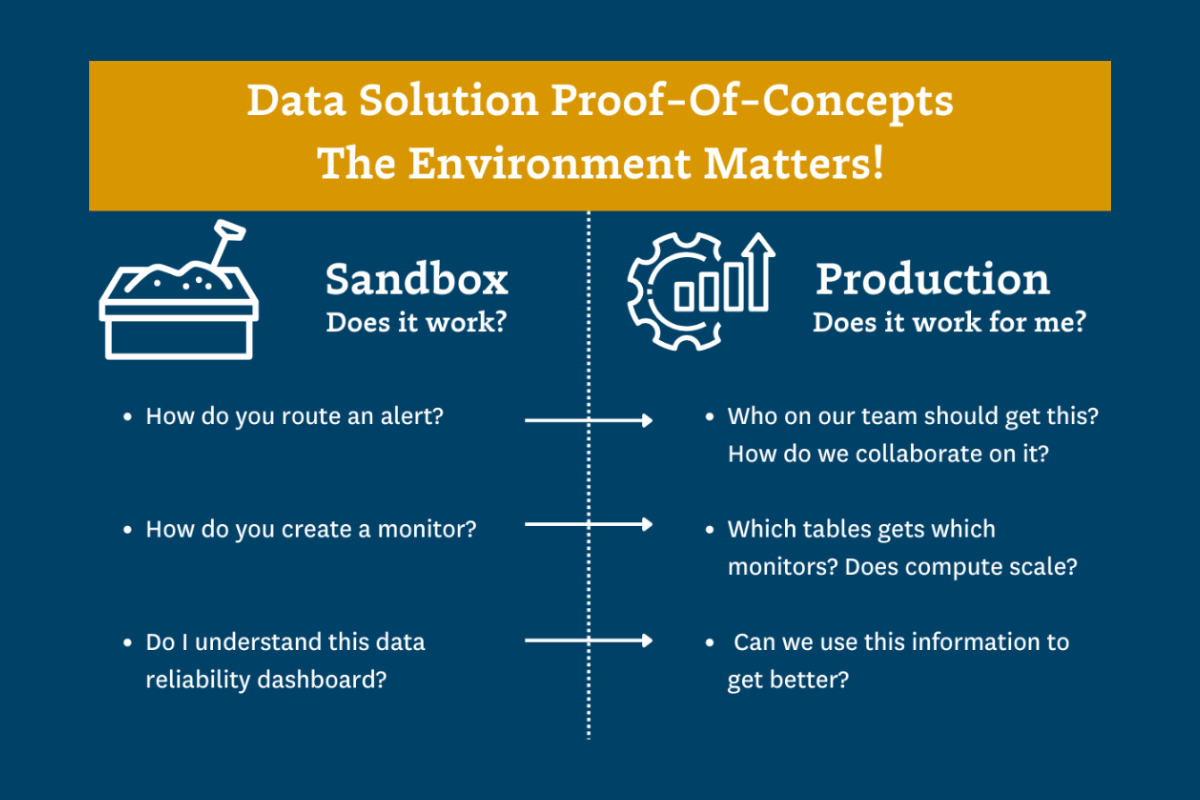

Vimeo then evaluated Monte Carlo to help with its data trust and validation objectives. The combination of Snowflake, Looker and Monte Carlo was a perfect match to generate immediate results.

“I felt like we literally jumped to the future,” said Lior. “What Monte Carlo did, which was amazing in my mind, was they hooked up to Snowflake and to Looker, and without me setting up anything, they started listening and building anomaly detection to all the tables and datasets we have.”

While the scale of insights and alerts initially seemed daunting, Lior rolled out the solution gradually to help create relationships and jumpstart evidence-based conversations on data quality expectations.

“My team’s first reaction was that they would have to respond to alerts all day, but we were able to avoid alert fatigue by focusing on a specific schema on a specific data set, and turn on the alerts there,” said Lior. “You cannot debug everything yourself, sometimes, you also need to go to the engineering team and talk to them about what’s broken.”

Monte Carlo’s ability to group related alerts and send them to dedicated Slack channels helped form an incident response program and provided Lior with increased visibility.

“We started building these relationships where I know who’s the team driving the data set,” said Lior. “I can set up these Slack channels where the alerts go and make sure the stakeholders are also on that channel and the publishers are on that channel and we have a whole kumbaya to understand if a problem should be investigated.”

The Result: A Culture of Data Accountability

With end-to-end data observability across the entire data stack, Vimeo achieved the scale they needed and gained unrivaled insight into their data quality with the help of Snowflake and Monte Carlo.

“Suddenly I’m starting to become aware of problems I wasn’t aware of at all,” said Lior. “For example, I had no idea that a specific data set would have an issue once a week where it would not be refreshed for two days.”
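
A refresh gap like the one in this example is what a freshness check is meant to catch. The sketch below assumes you can already obtain a last-updated timestamp for each table, however your warehouse exposes it. The table names, timestamps, and 24-hour threshold are hypothetical, and this is a simplified illustration rather than how Monte Carlo detects freshness issues.

```python
from datetime import datetime, timedelta, timezone


def stale_tables(last_updated, now, max_age):
    """Return the sorted names of tables whose latest refresh is older than max_age."""
    return sorted(name for name, ts in last_updated.items() if now - ts > max_age)


# Hypothetical snapshot of table refresh times.
now = datetime(2021, 8, 18, 12, 0, tzinfo=timezone.utc)
last_updated = {
    "events.video_plays": now - timedelta(hours=2),
    "reports.weekly_summary": now - timedelta(days=2),
}

print(stale_tables(last_updated, now, max_age=timedelta(hours=24)))
```

Passing `now` in explicitly, instead of reading the clock inside the function, keeps the check deterministic and easy to test.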

Not only did Monte Carlo help Lior instill data trust across the organization, it also formed a culture of accountability and shared responsibility.

“You build a relationship with teams that are more data driven. Some of them get excited about solving these things,” said Lior. “One of the things that helps us is we’re getting fancy reports which for me is a get out of jail free card. Now, whenever someone comes to me and says ‘hey the data is bad,’ I can find out where the data is bad and say ‘well you all haven’t been responsive to alerts for the past month, obviously it’s bad.’”

“It starts the conversation at the point where you’re setting up the expectations and escalate with your stakeholders and [Monte Carlo] helps facilitate those discussions,” he said.

Looking back on the build versus buy question, Lior is happy with the decision they made.

“I am happy we didn’t try to build this up ourselves.”
