How Fox Facilitates Data Trust with Governance and Monte Carlo

The Super Bowl. American Idol. The Simpsons. What do all of these programs have in common?

They run on data powered by Fox.

Building indelible entertainment experiences like these is no walk in the park. Factor in the advertising strategies, media production, partner programming, audience analytics…and you’re looking at an ocean of data that would fill even the deepest trench (we’d like a television show about that too, please!).

So how does Fox’s data strategy support these complex data workflows? And with so many data teams across functions, how does Fox approach data governance?

At IMPACT 2023, Barr Moses, CEO & Co-founder of Monte Carlo, sat down with Oliver Gomes, VP of Business Intelligence, Analytics, and Strategy at Fox, to discuss the foundations upholding Fox’s data strategy, what he envisions for a future full of more data than ever, and his take on the generative AI models that will be able to handle it.


Solve data silos starting at the people-level

Within such a large data organization, data quality has to be a top priority. But when a business has scaled to the level of Fox, data silos commonly emerge, especially as pipelines become more complex.

Silos like these are common across data organizations, especially at this scale. Data engineers typically have a deep understanding of the shape and model of the underlying data, but their knowledge of the data’s life cycle often ends there. The broader semantic context of how the data is used tends to remain with members of the data organization further downstream.

For Oliver, solving those silos starts at the people level.

One of the most important tenets of his data strategy is ensuring that the data team understands the impact of the data, and thus of their own work, at the same level of complexity as the consumers downstream, like the BI teams, data scientists and analysts, and customer-facing business stakeholders.

“It’s really easy for data engineers to not know how the data they’re handling is being used,” says Oliver. “But a lot of data stewards want to be brought into the knowledge they’re missing.”

For Oliver, it’s essential not only that data engineers understand the impact of the data, but also that data consumers understand where their data came from and how its quality is being managed.

“You need to bring the entire business into the core of what you’re building and why,” says Oliver. “What’s the end state? You need to get the data teams bought into the end value and how it helps them.”

When the broader data organization has a deeper understanding of how the data is impacting the business, everyone feels more connected to the “why” behind the queries and ad-hoc requests. As Fox has continued to scale its data operations, connecting the data teams has also meant setting universal standards so that data is governed consistently across teams.

Keep data governance approachable

Like filing those pesky expense reports, data governance can feel like a necessary evil. For Fox, data governance is a relatively new practice–but it’s one that’s made a huge impact.

“A year ago, data governance did not exist at Fox,” says Oliver. “People said they governed their data in many ways, but there was no universal understanding of what data governance meant.”

Developing guiding principles for data governance across Fox was Gomes’ first step when it came to building a data platform. But because it was a change, it wasn’t exactly well-received within the data organization.

“Change isn’t simple,” says Gomes. “Even if they know it’s right, it’s tough to get people to understand why it’s worth it to change their routines. People are used to writing a query, and then you suddenly tell them you’re going to write another query on top of it? That’s a new change. You have to manage those expectations and emphasize that it’s for the greater good.”

And, with the right processes and tools in place—like data observability—data governance doesn’t have to be a major lift for the data team. “We want to show teams that data governance is actually a lighter weight than they think. It doesn’t need to be a formal council.”

Oliver Gomes’ data governance best practices

Gomes shares his three key best practices for optimizing Fox’s data governance model:

- Create a lean team

- Create principles

- Govern at the edges

Data governance principles should be established, but that doesn’t mean those principles should stifle production or workflows. In fact, Gomes encourages the opposite.

“Build what you want. When we move it to production, we’ll put it up against our principles. From there, engineers can then understand what’s not compliant with the data governance model, and they can fix it. Going forward, they’ll be able to build with governance in mind.”

“Data governance shouldn’t mean that someone is standing over your shoulder all the time,” says Gomes.
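The "govern at the edges" idea can be pictured as a check that runs only when a dataset is promoted to production. The sketch below is a minimal illustration, not Fox's implementation: the `Dataset` fields, the three example principles, and the `check_at_promotion` function are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Dataset:
    """Hypothetical metadata describing a dataset headed for production."""
    name: str
    owner: str = ""
    description: str = ""
    pii_columns: List[str] = field(default_factory=list)
    masked_columns: List[str] = field(default_factory=list)


# Illustrative principles (assumptions, not Fox's actual rules).
# Each entry is a label plus a predicate that returns True when compliant.
PRINCIPLES: List[Tuple[str, Callable[[Dataset], bool]]] = [
    ("has an owner", lambda d: bool(d.owner.strip())),
    ("has a description", lambda d: bool(d.description.strip())),
    ("all PII columns are masked",
     lambda d: set(d.pii_columns) <= set(d.masked_columns)),
]


def check_at_promotion(dataset: Dataset) -> List[str]:
    """Return the labels of every principle the dataset does not satisfy."""
    return [label for label, is_compliant in PRINCIPLES
            if not is_compliant(dataset)]


if __name__ == "__main__":
    draft = Dataset(
        name="ad_impressions",
        description="Daily ad impressions",
        pii_columns=["email"],
    )
    # Engineers build freely; violations only surface at promotion time.
    for violation in check_at_promotion(draft):
        print(f"{draft.name}: not compliant, {violation}")
```

Because the check returns a plain list of unmet principles, engineers see exactly what to fix before release, and nothing blocks them while they are still building.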

Manage and promote the value of high-quality data

Data needs to be secure, it needs to be private, but it also needs to be reliable. And internal communication about the quality of the data is key to an effective data governance rollout.

From issues in the data to breaks in code, there are a million ways the data can go bad. To facilitate visibility and empower his team to manage data quality issues more effectively, Gomes turned to Monte Carlo. With Monte Carlo’s data observability platform, Gomes’ team is automatically alerted as soon as an anomaly is detected, empowering them to respond more efficiently to keep data reliable and compliant.
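To make "an anomaly is detected" concrete, here is a deliberately simple sketch of one kind of check: flagging a table whose latest row count deviates sharply from its recent history. This is an assumption-laden illustration using a basic z-score, not a description of how Monte Carlo detects anomalies; the function name `is_volume_anomaly` and the default threshold are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def is_volume_anomaly(history: Sequence[float], latest: float,
                      threshold: float = 3.0) -> bool:
    """Flag `latest` if it sits more than `threshold` sample standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        # Not enough history to estimate spread; do not alert.
        return False
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # History is perfectly flat, so any change counts as a deviation.
        return latest != mu
    return abs(latest - mu) / sigma > threshold


if __name__ == "__main__":
    daily_row_counts = [1000, 1020, 980, 1010, 990]
    print(is_volume_anomaly(daily_row_counts, 400))   # sharp drop
    print(is_volume_anomaly(daily_row_counts, 1005))  # ordinary day
```

A production system would typically account for seasonality and trend as well; the point here is only that an alert can be driven by a programmatic rule rather than by someone noticing a broken dashboard.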

But visibility isn’t just for the data team. Once Gomes had a programmatic solution for data quality, he focused on managing perceptions among downstream consumers by evangelizing the value of the rich, high-quality data flowing into their critical data products.

“You need to promote that data quality to the business,” says Gomes. “The data is fresh, it’s timely, it’s been quality checked. But we want those business users to do more than just believe us. We want them involved in data governance too.”

And with targeted Monte Carlo alerts for stakeholders in Slack and Teams, stakeholders always know exactly when a dataset is—and is not—safe to use, developing trust in the data team along the way.

“We want them to see the alerts and know that the data they’re using has been governed,” says Oliver.

Finally, a self-serve model enabled stakeholders not only to feel confident in the quality of their data, but also to act on it faster.

How will Generative AI impact data quality at Fox?

Data quality is a top priority for Fox. So, how does Oliver see this high-quality data feeding into the generative AI craze?

“GenAI is not all hype,” says Gomes. “But its value has not been realized yet.”

Fox, like many enterprise companies, is interested in understanding how it can leverage generative AI to improve its business functions, especially when it comes to simplifying tedious but essential tasks.

“The immediate impact I see is the increase in operational efficiency,” says Oliver. “For example, it used to take a very long time to update the metadata in the data catalog. Now that’s something generative AI can do. It enables users to interact with the data faster.”

Fox is one of many companies interested in leveraging generative AI, and there are many use cases that can drive efficiencies and business value.

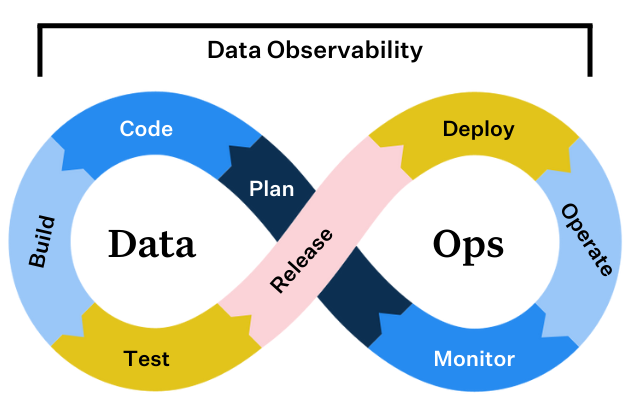

You can’t have data governance without data observability

Data governance is all about standards. And standards create the framework for data trust. “[Data governance] creates a world that’s a little more templatized and organized,” says Oliver.

But standards are only as good as your ability to keep them. And maintaining standards is what data observability is all about.

Effective data governance can’t exist without high-quality data. By leveraging data observability as a key component of a data governance model, data teams gain the tools to maintain quality standards at scale, understand where they’re falling short, and find root causes quickly to resolve issues faster.

To learn more about how data observability fits into your data governance model, talk to our team.
