Mastering Data Migrations: A Comprehensive Guide

Far from a mere “copy-paste” job, a data migration can be deceptively tricky, and it is one of the most challenging aspects of a data engineer’s job.

But as businesses pivot and technologies advance, data migrations are—regrettably—unavoidable.

Much like a chess grandmaster contemplating the next play, a data engineer planning a migration is making a strategic move. A good data storage migration ensures data integrity, platform compatibility, and future relevance.

In this article, we’ll discuss the intricacies of data migrations, highlight the potential pitfalls and complexities (particularly when things go wrong), and explain how to manage them effectively so your data migration is a success.

What makes data migrations complex?

A data migration is the process of transferring datasets, often ones resting in outdated systems, to newer, more efficient systems.

Sure, you’re moving data from point A to point B, but the reality is far more nuanced. Beneath the surface lie intricate processes involving data validation, transformation, and ensuring compatibility with new systems.

You have to ensure that data remains intact and consistent during the migration process.

Different systems have different data structures or schemas. Migrating data often means transforming it to fit the new schema. It’s like trying to fit a square peg in a round hole. Adjustments and transformations are crucial.
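As a rough illustration of that kind of transformation, here is a minimal Python sketch. The legacy column names, the target field names, and the MM/DD/YYYY date format are all assumptions made up for this example, not details of any particular system.

```python
from datetime import datetime

# Hypothetical mapping from legacy column names to target schema names.
FIELD_MAP = {
    "cust_name": "full_name",
    "cust_email": "email",
    "signup_dt": "signed_up_at",
}

def transform_row(legacy_row: dict) -> dict:
    """Rename fields and normalize values so the row fits the target schema."""
    # Keep only the columns the target schema knows about, under their new names.
    row = {FIELD_MAP[key]: value for key, value in legacy_row.items() if key in FIELD_MAP}
    row["full_name"] = row["full_name"].strip()
    row["email"] = row["email"].strip().lower()
    # Assumption: legacy dates are MM/DD/YYYY strings; the target wants ISO 8601.
    row["signed_up_at"] = datetime.strptime(row["signed_up_at"], "%m/%d/%Y").date().isoformat()
    return row

legacy = {
    "cust_name": "  Ada Lovelace ",
    "cust_email": "ADA@Example.com",
    "signup_dt": "12/10/2021",
    "fax": None,  # no equivalent in the target schema, so it is dropped
}
print(transform_row(legacy))
```

Keeping the mapping in one dictionary makes it easy to review with stakeholders before any data moves.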

At the same time, businesses can’t afford long downtimes. Data migrations need to be swift to ensure minimal disruption. It’s a race against time, balancing speed with accuracy.

Then there are the unforeseen challenges, such as software incompatibilities or unexpected data anomalies.

And the larger your datasets, the more meticulous planning you have to do. Data migrations demand a deep understanding of both the old and new systems, and a readiness to tackle unexpected hurdles.

It’s not just about moving items; it’s about ensuring everything fits perfectly in the new space.

Pre-migration preparations

Begin with a thorough examination of your current system. Understand its structure, the types of data it holds, and any existing issues or anomalies. Understand the differences and similarities between the source system and the target system.

Set clear objectives. Determine what data needs to be migrated. Is it the entire database or just specific segments? What does a successful data migration look like for your organization? Is it zero downtime, data integrity, or perhaps both?

Engage with all relevant stakeholders, from IT teams to end-users. Their insights can be invaluable in identifying potential challenges and solutions. Work with them to develop a detailed migration plan, outlining each step, responsible parties, and timelines.

Before initiating any migration, ensure you have a comprehensive backup of all data. This acts as a safety net in case of unforeseen issues. Design a strategy to revert changes if the migration encounters critical issues. This ensures business continuity and data safety.
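To make the backup-and-revert idea concrete, here is a small sketch using SQLite through Python's standard `sqlite3` module. SQLite is only a stand-in chosen so the example runs anywhere; the table, the file name, and the simulated failure are assumptions, and a production system would rely on its own database's backup tooling.

```python
import os
import sqlite3
import tempfile

def copy_database(source: sqlite3.Connection, target: sqlite3.Connection) -> None:
    """Copy the full contents of `source` into `target`, replacing what was there."""
    source.backup(target)

# Stand-in for the live system (hypothetical table and data).
live = sqlite3.connect(":memory:")
live.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
live.execute("INSERT INTO customers (name) VALUES ('Ada')")
live.commit()

# Take the safety net before changing anything.
backup_path = os.path.join(tempfile.mkdtemp(), "pre_migration.db")
backup = sqlite3.connect(backup_path)
copy_database(live, backup)

# Simulate a migration step that goes badly wrong.
live.execute("DELETE FROM customers")
live.commit()

# Revert: copy the backup over the live database.
copy_database(backup, live)
restored_count = live.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
print(restored_count)
backup.close()
```

The point of the sketch is the ordering: the backup exists before the first write, and the revert path is exercised rather than assumed.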

Ensure you have the necessary hardware and software resources to facilitate the migration. Popular migration tools are discussed below.

Set up a testing environment that mirrors the production system. This allows for realistic testing without affecting live data. Conduct mock data migrations in the testing environment to identify potential issues and refine the migration process.

Have a communications plan to keep all stakeholders informed about the migration’s progress, potential downtimes, and any changes that might affect them. Establish a system for stakeholders to report issues or provide feedback during and after the migration.

Remember, pre-migration preparations are the foundation upon which successful data migrations are built.

How to choose the right migration tools and frameworks

The success of a data migration often hinges on the capabilities of the chosen tools and frameworks. But with a plethora of options available, how do you make the right choice? Let’s delve into the art of selecting the perfect toolkit for your migration.

First, what are your needs? Are you conducting a one-time migration or will there be recurring transfers? Different scenarios demand different tools. The intricacy of your data—its volume, variety, and velocity—can dictate the kind of tools you’ll need.

Popular categories of migration tools include:

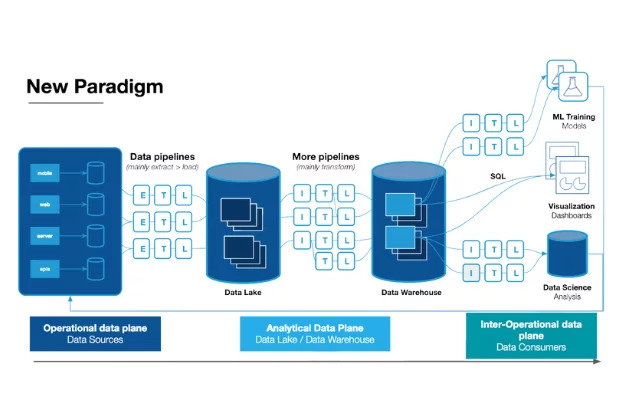

- Database Management Systems (DBMS): Tools like MySQL Workbench or Microsoft SQL Server Management Studio offer built-in migration assistants.

- ETL Tools: Extract, Transform, Load (ETL) tools such as Talend or Apache NiFi are designed for complex data integrations and migrations.

- Cloud Services: Platforms like AWS Database Migration Service or Google Cloud’s BigQuery Data Transfer Service provide cloud-based migration solutions.

While a tool directly “does” a task for you, a framework provides a structured “way” to do it yourself. Frameworks lay down the methodology for the entire migration process, providing a skeleton or blueprint that you build upon. They can help you do things like automatically generate migration files, store the migration references in the database, and allow for easy rollbacks.
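The sketch below shows, in simplified form, the bookkeeping such frameworks typically handle: recording which migrations have been applied in a table and undoing them in reverse order. It is not how any specific framework is implemented. The `schema_migrations` table name, the `MIGRATIONS` list, and the SQL statements are assumptions for illustration, with SQLite standing in for the real database.

```python
import sqlite3

# Hypothetical migrations: (version, "up" statement, "down" statement).
MIGRATIONS = [
    (1, "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)",
        "DROP TABLE customers"),
    (2, "CREATE INDEX idx_customers_name ON customers (name)",
        "DROP INDEX idx_customers_name"),
]

def applied_versions(conn: sqlite3.Connection) -> set:
    """Return the versions recorded in the bookkeeping table."""
    conn.execute("CREATE TABLE IF NOT EXISTS schema_migrations (version INTEGER PRIMARY KEY)")
    return {row[0] for row in conn.execute("SELECT version FROM schema_migrations")}

def migrate(conn: sqlite3.Connection) -> None:
    """Apply every migration that has not been recorded yet, in order."""
    done = applied_versions(conn)
    for version, up_sql, _ in MIGRATIONS:
        if version not in done:
            conn.execute(up_sql)
            conn.execute("INSERT INTO schema_migrations (version) VALUES (?)", (version,))
            conn.commit()

def rollback(conn: sqlite3.Connection, target_version: int) -> None:
    """Undo migrations newer than `target_version`, newest first."""
    done = applied_versions(conn)
    for version, _, down_sql in reversed(MIGRATIONS):
        if version in done and version > target_version:
            conn.execute(down_sql)
            conn.execute("DELETE FROM schema_migrations WHERE version = ?", (version,))
            conn.commit()

conn = sqlite3.connect(":memory:")
migrate(conn)
migrate(conn)  # running again is a no-op because versions are recorded
print(sorted(applied_versions(conn)))
rollback(conn, target_version=1)
print(sorted(applied_versions(conn)))
```

Because applied versions live in the database itself, any environment can be asked which migrations it has already seen.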

Popular categories of migration frameworks include:

- ORM-Based Frameworks:

  - Django Migrations (Python): Offers a robust migration system, allowing for automated migration files and easy rollbacks.

  - ActiveRecord (Ruby on Rails): Allows developers to write migrations as Ruby code, abstracting away the SQL complexities.

  - Hibernate (Java): An ORM framework that provides tools for database-independent application development.

- Version Control for Database:

- Database Refactoring Frameworks:

  - DBmaestro: Emphasizes automation and offers a database release automation solution.

  - Redgate’s SQL Change Automation: Integrates with SQL Server Management Studio for automated migrations.

- Migration Frameworks for NoSQL:

Last but not least, no matter what type of migration you’re doing, you need a vigilant overseer to ensure every step is executed flawlessly. That’s where data observability platforms like Monte Carlo come in. Acting as your migration’s safety net, Monte Carlo offers real-time anomaly detection and the ability to visualize data lineage, while its trustworthiness metrics and collaborative features help ensure that, post-migration, your data remains reliable and decision-ready. Learn more in our What is Data Observability? post.

The multi-step migration process

Now that you’ve done your pre-migration prep and have your tools handy, it’s off to the races.

Each step below is crucial, building on the previous one, ensuring that by journey’s end, your data is safely and accurately relocated.

🖐️ Wait, you did do your pre-migration prep, right?

- Initial assessment and planning

- Data backup

- Schema mapping

- Engage stakeholders

- Dry run in a testing environment

- Communications plan

Scroll back up to pre-migration preparations for all the details if needed.

🏇 Now with everything set, it’s time for the main event

- Transfer data from the source to the target system: extract the data, transform it to fit the schema of the target system, and load the data.

- Handle errors: there might be conflicts, such as duplicate keys or constraint violations, that need to be resolved, either manually or through predefined rules.

- Have a catch-up mechanism: if the source system remains in use during the migration, any new data or changes made post-extraction need to be synchronized with the target system.

- Data validation and verification: after loading, perform initial checks to ensure data integrity. Also validate the data against specific business rules to ensure it meets operational requirements.
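The four steps above can be sketched end to end in a few lines. This is a simplified illustration with SQLite standing in for both systems; the `users` table, the `updated_at` watermark column, and the rule of rejecting (rather than merging) conflicting rows are all assumptions. It also only catches up on rows added during the migration; updates to rows that were already migrated would need an upsert rule instead.

```python
import sqlite3

source = sqlite3.connect(":memory:")
target = sqlite3.connect(":memory:")
source.execute("CREATE TABLE users (id INTEGER, email TEXT, updated_at INTEGER)")
source.executemany(
    "INSERT INTO users VALUES (?, ?, ?)",
    [(1, "A@x.com", 10), (2, "b@x.com", 11), (2, "b@x.com", 11)],  # note the duplicate id
)
target.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL, updated_at INTEGER)"
)

def migrate_since(watermark: int) -> list:
    """Extract rows changed after `watermark`, transform them, and load them.

    Returns the rows that violated a constraint in the target, with the reason.
    """
    rejected = []
    rows = source.execute(
        "SELECT id, email, updated_at FROM users WHERE updated_at > ?", (watermark,)
    ).fetchall()
    for user_id, email, updated_at in rows:
        try:
            # Transform: the target is assumed to want lowercase emails.
            target.execute(
                "INSERT INTO users VALUES (?, ?, ?)", (user_id, email.lower(), updated_at)
            )
        except sqlite3.IntegrityError as exc:
            rejected.append((user_id, str(exc)))  # keep for manual or rule-based resolution
    target.commit()
    return rejected

rejected = migrate_since(0)  # initial bulk load

# The source stays in use: a new row arrives after the first extraction.
source.execute("INSERT INTO users VALUES (3, 'c@x.com', 20)")
watermark = target.execute("SELECT MAX(updated_at) FROM users").fetchone()[0]
rejected += migrate_since(watermark)  # catch-up pass

# Validation: every distinct source id should exist exactly once in the target.
source_ids = source.execute("SELECT COUNT(DISTINCT id) FROM users").fetchone()[0]
target_ids = target.execute("SELECT COUNT(*) FROM users").fetchone()[0]
print(source_ids, target_ids, rejected)
```

Collecting rejected rows instead of aborting on the first conflict lets the bulk of the data land while the exceptions are reviewed separately.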

🥂 Celebrate, but continue to monitor

- Look for any performance issues, anomalies, or inconsistencies.

- Gather feedback to understand any challenges faced by end-users and address them promptly.

- Document every step of the migration process, noting challenges faced, solutions applied, and lessons learned. This documentation serves as a valuable resource for future migrations.
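One simple way to look for inconsistencies after cutover is to compare a fingerprint of each table in the old and new systems. The sketch below is a minimal version of that idea, again using SQLite as a stand-in. The `table_fingerprint` helper and the `accounts` table are hypothetical, and hashing `repr(row)` assumes both systems return values as the same Python types; across different database engines you would normalize values first.

```python
import hashlib
import sqlite3

def table_fingerprint(conn: sqlite3.Connection, table: str, key: str) -> tuple:
    """Return (row_count, sha256 hex digest) over all rows ordered by `key`."""
    digest = hashlib.sha256()
    count = 0
    # Table and key names are interpolated directly, so they must come from
    # trusted code, never from user input.
    for row in conn.execute(f"SELECT * FROM {table} ORDER BY {key}"):
        digest.update(repr(row).encode("utf-8"))
        count += 1
    return count, digest.hexdigest()

def make_db(rows: list) -> sqlite3.Connection:
    """Build a throwaway database holding the given account rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER)")
    conn.executemany("INSERT INTO accounts VALUES (?, ?)", rows)
    return conn

source = make_db([(1, 100), (2, 250)])
target = make_db([(2, 250), (1, 100)])  # same rows, loaded in a different order

matches = table_fingerprint(source, "accounts", "id") == table_fingerprint(target, "accounts", "id")

# Simulate a silent corruption in the target after the migration.
target.execute("UPDATE accounts SET balance = 205 WHERE id = 2")
still_matches = table_fingerprint(source, "accounts", "id") == table_fingerprint(target, "accounts", "id")
print(matches, still_matches)
```

A row count alone would miss the corrupted balance here, which is why the content hash is included alongside it.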

How to handle challenges and rollbacks

Despite meticulous planning, even the best of us run into challenges. Hopefully yours are minor hiccups rather than major roadblocks, but being prepared to handle these challenges – and, if necessary, execute a rollback – can make all the difference.

First, if there’s a bump in the data migration journey, you need to feel it. That’s why data observability tools like Monte Carlo are so important: they keep an eye on the migration process as it unfolds and log any errors or anomalies with detailed information, making it easier to diagnose and address the root cause.

Common migration challenges include data loss, data corruption, performance issues, or dependency failures.

In cases where only specific segments of the migration fail, consider rolling back only the affected segments instead of the entire migration. If shit really hits the fan, restore the full system to its pre-migration state and do a root cause analysis to prevent recurrence.
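Rolling back only the affected segment can be sketched with database savepoints. The example below uses SQLite, and the yearly segments, the `orders` table, and the deliberately bad row are assumptions for illustration; whether your target system supports savepoints, and at what granularity, is something to confirm before relying on this pattern.

```python
import sqlite3

# isolation_level=None turns off implicit transactions so we can manage them by hand.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL NOT NULL)")

# Hypothetical segments of the migration, keyed by year.
SEGMENTS = {
    "2022": [(1, 10.0), (2, 12.5)],
    "2023": [(3, 8.0), (4, None)],  # bad row: violates NOT NULL
    "2024": [(5, 20.0)],
}

failed = []
conn.execute("BEGIN")
for name, rows in SEGMENTS.items():
    conn.execute("SAVEPOINT segment")
    try:
        conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
        conn.execute("RELEASE SAVEPOINT segment")
    except sqlite3.IntegrityError:
        # Undo only this segment; earlier segments stay in the transaction.
        conn.execute("ROLLBACK TO SAVEPOINT segment")
        conn.execute("RELEASE SAVEPOINT segment")
        failed.append(name)
conn.execute("COMMIT")

migrated_ids = [row[0] for row in conn.execute("SELECT id FROM orders ORDER BY id")]
print(migrated_ids, failed)
```

The failed segment names give you a precise list to investigate and rerun once the root cause is fixed.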

Keep stakeholders informed about any challenges and the steps being taken to address them. Transparency is crucial to maintain trust.

Best practices for successful data migrations

Having been in the trenches of numerous data migrations, bearing the battle scars to prove it, I’ve gleaned invaluable insights from each challenge faced. Here’s a distillation of what I’ve learned, my tried-and-true best practices:

- Planning is everything

- Prioritize data quality

- Choose tools and frameworks that can handle not just your current data needs, but also anticipated future growth

- Beyond the initial purchase or subscription cost of tools, consider potential training, maintenance, and upgrade expenses

- Test, test, test

- Migrate in phases, learn from each phase, and refine the process

- Prepare for rollbacks

- Communication is key

- Document the entire process

Ready to elevate your next data migration with unparalleled data observability?

As I’ve emphasized, data observability plays a pivotal role in ensuring a seamless transition. Reach out to our team and discover how Monte Carlo can expedite a smooth and successful migration.
