How Compass is Reinventing Real Estate with Data Reliability in the Cloud

Compass, a $6.4b real estate unicorn, works with real estate agents, buyers, and sellers to support the entire buying and selling workflow through their real estate technology platform.

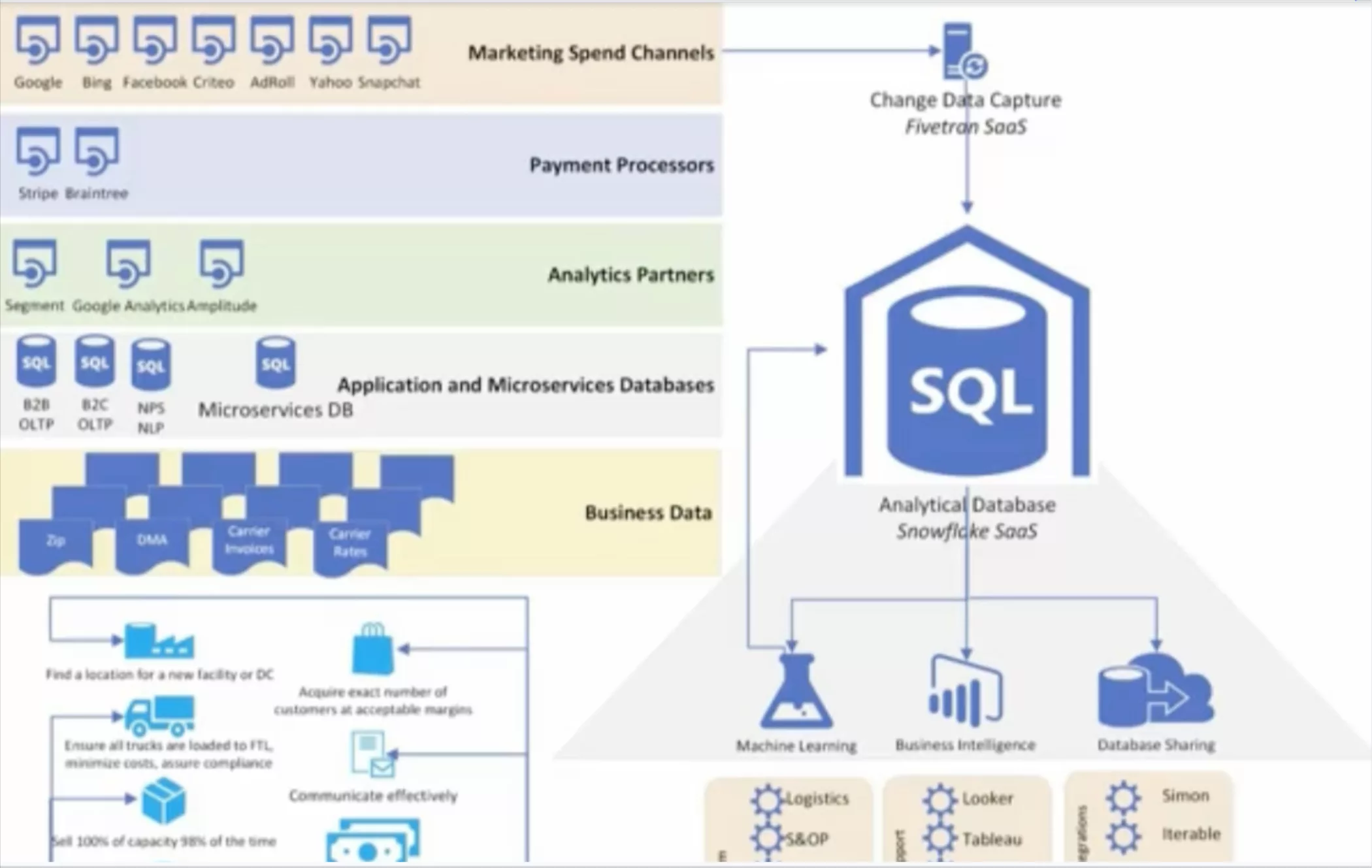

Compass uses data to help fulfill their mission “to help everyone find their place in the world” and to fuel their remarkable growth by creating advanced technology products for agents, buyers, and sellers. The Compass user experience is built on a complex set of technologies, including a powerful mobile app, agent-facing Marketing Center, intelligent pricing tools, and a proprietary CRM.

Behind the scenes, Compass employs a data infrastructure and intelligence team of 10 people.

“The team is responsible for all the data warehousing, data sourcing, and data enablement capabilities for the rest of the company,” said Suvayan Roy, senior product manager. They use solutions such as Redshift, Snowflake, Tableau, and Looker to support their data needs.

Compass uses their vast amounts of data not only to power real estate deals through their customer-facing products, but to shape the future of the products themselves.

“Most of the data that we have is used to drive a lot of critical business choices and decisions, especially for us in the product and engineering space,” said Roy. “We aim to be a tech-driven brokerage and gain insight into how our products are doing in the market, how agents are reacting to them, what products are being used the most…and areas where we might want to invest to make our product better.”

The challenge: monitoring data quality at scale

As at many startups, ensuring data quality across complex pipelines is a top priority at Compass.

“In any startup, there are only finite resources to look at data quality and measure when data is being loaded, if the right row count is there, even just very fundamentally basic things,” said Roy. “As a fast-growing company, overall data quality is not always the first thing that we do with additional engineering headcount.”

For some critical datasets, the team built dashboards to trigger error notifications, but they relied primarily on ad-hoc manual oversight—analysts noticing when something looked off and flagging the need for an investigation.

That is, until they partnered with Monte Carlo on Data Observability. By leveraging the Monte Carlo Data Observability Platform, they could easily monitor key data features including distribution, volume, freshness, schema, and lineage at each stage of the data pipeline.
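To make a few of these checks concrete, here is a minimal sketch in Python of what freshness, volume, and schema monitoring can look like in principle. It is not Monte Carlo's implementation or API: the function names, thresholds, and sample values are hypothetical, and a real platform would learn thresholds from historical metadata rather than hard-code them.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev


def is_fresh(last_loaded_at, now, max_age):
    """Freshness: the table was last loaded within the allowed window."""
    return now - last_loaded_at <= max_age


def volume_is_normal(history, todays_rows, max_z=3.0):
    """Volume: today's row count lies within max_z standard deviations
    of recent daily row counts (a simple z-score rule, chosen for illustration)."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return todays_rows == mu
    return abs(todays_rows - mu) / sigma <= max_z


def schema_changes(expected, actual):
    """Schema: columns added or removed relative to the expected set."""
    return {
        "added": sorted(set(actual) - set(expected)),
        "removed": sorted(set(expected) - set(actual)),
    }


if __name__ == "__main__":
    # Hypothetical metadata for a single table.
    now = datetime(2021, 1, 2, 12, 0)
    print(is_fresh(datetime(2021, 1, 2, 6, 0), now, timedelta(hours=12)))
    print(volume_is_normal([1000, 1020, 980, 1010, 990], 100))
    print(schema_changes(["id", "price"], ["id", "price", "zip"]))
```

Checks like these cover the "very fundamentally basic things" Roy describes, such as whether data loaded on time and whether the row count looks right. Distribution and lineage monitoring need more context (column-level statistics and query history) and are left out of this sketch.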

The solution: Data Observability

The Compass team decided to try out the platform as a proof of concept. Six months later, Monte Carlo continues to solve data downtime issues and provide peace of mind. Roy summed up the value as “having a tool that lets us be able to monitor [data quality] without having to invest in building an in-house solution or other options.”

Monte Carlo has also helped Compass increase visibility into the health of its data infrastructure.

“If something happens to our upstream production data, we know that Monte Carlo will be able to identify the root cause of this issue in the source system so that we can go back and correct it,” said Roy. “Monte Carlo ensures that we’re on top of any data fire drills the moment they arise, before they impact the business.”

The outcome: data reliability delivered

For Compass, Monte Carlo’s approach to data observability empowered them to solve data quality issues fast so they could start trusting their data to deliver reliable, actionable insights.

Among other benefits, Monte Carlo has enabled Compass to:

- Increase cost savings by reducing the time to resolution of tedious data fire drills and restoring trust in data for vital decision making

- Improve collaboration between data engineering and data analyst teams so that both understand key dependencies between data assets

- Drive greater efficiency and productivity by gaining end-to-end visibility into the health, usage patterns, and relevancy of data assets

With automated data observability, Roy and his team can sleep soundly at night and continue focusing on building products, meeting customer needs, and revolutionizing real estate without having to worry about the state of their data and analytics.
