Snowflake Data Mesh: Ensure Reliable Data with Data Observability

There’s a lot of content out there about why a data mesh is (or isn’t) the best thing since sliced bread. But one thing’s for sure: if you can’t trust the data powering your analytics architecture, it’s hard to justify the investment.

Here’s how Snowflake and Monte Carlo are working together to help data teams realize the potential of the data mesh with end-to-end data observability.

The data mesh contains multitudes. It’s a paradigm shift, an architectural framework, a popular trend, and a source of certain misconceptions in the data engineering community.

But in the words of Zhamak Dehghani, who pioneered the term, the data mesh is actually defined as a “socio-technical shift—a new approach in how we collect, manage, and share data.”

What does that mean, exactly? Well, as opposed to the traditional model of a centralized data engineering team owning all things data, the data mesh federates ownership among domain teams. Those teams are held accountable for providing their data as products to consumers, while also facilitating communication between distributed data across different locations.

Put another way, there are four critical features of the data mesh:

Domain-driven ownership

Under the data mesh framework, data is owned by domain teams—from sourcing and ingestion to building and managing pipelines to surfacing data for other teams or consumers. This approach puts the onus on the experts closest to the business needs to maintain data quality and governance.

Self-service infrastructure

The data mesh calls for the democratization of data, or making data available for domain teams to access when needed through self-serve infrastructure—rather than relying on overworked engineering teams to handle ad-hoc requests and risking bottlenecks.

Ability to support data as a product

The data mesh framework expects domain teams to not only own and self-serve data, but to make it useful by creating data products that are accessible to other teams within the organization.

Federated governance

Even while democratizing access to and ownership of data, the data mesh architecture includes a thorough approach to governance. Each domain team defines and manages policies and controls that ensure data quality and compliance standards are met.

The data mesh has grown in popularity because it solves a lot of challenges both engineering and business teams face under the traditional model of data management. Over the last decade, centralized teams have faced growing burdens as organizations increasingly invested in data. More sources and more consumers meant more pipelines and more challenges. Specialized data engineering teams were expected to build, maintain, and troubleshoot this complex infrastructure, but without the business context of knowing exactly how the data was being used or for what purpose. Too often, this led to overworked data engineering teams and impatient downstream consumers waiting for answers to ad-hoc requests or fixes to broken pipelines.

The data mesh framework addresses these concerns by putting ownership and accountability back onto domain teams. For many forward-thinking organizations, this approach is a welcome change with a few critical benefits, including simplifying access to data, allowing for greater transparency, and improving the speed of data analytics.

All that said, some leaders are hesitant to embrace the data mesh because of one concern: ensuring data quality. Federated governance and domain-driven quality control can be unfamiliar practices that raise concerns around standardization, compliance, and maintaining trust in data.

While the data mesh represents a sociological shift as well as a technical one, the right tools can help address these concerns. In this article, we’ll share how some of the best data teams are designing their data meshes with Snowflake’s Data Cloud and Monte Carlo for end-to-end data observability.

The technical foundation of the data mesh: the cloud warehouse

As a distributed architecture, the building blocks of any data mesh is a cloud-native data warehouse or lake. Data must be stored and transformed in a platform designed to support both centralized standards and decentralized ownership of data—and Snowflake fits the bill.

Empowering domain-driven ownership

The fully managed central Snowflake platform allows domain teams to focus on data ownership and products while removing the need for provisioning, maintenance, upgrades, or downtimes. Domain teams can operate as distinct units and scale to the right number of users working with on-demand access to any amount of data.

Facilitating self-serve infrastructure

Additionally, Snowflake gives domain teams a one-stop-shop to access, process, prepare, and analyze data across its lifecycle. The Snowflake elastic performance engine allows teams to work in their language of choice (SQL, Java, Python, or a mix) as they power pipelines, reporting, applications, or exploration.

How companies are using Snowflake data meshes to enable their data pipelines

For example, global healthcare company Roche uses Snowflake to centralize infrastructure management while empowering domain teams responsible for data ownership and management across its lifecycle.

Similarly, tech-driven insurance provider Clearcover re-centered its data stack on Snowflake to reduce the bottlenecks that would occur when a single data engineering team was responsible for serving the company’s data needs.

And at Berlin-based Kolibri Games, replacing a SQL database with Snowflake helped lay the foundation for a shift to domain-specific data ownership and empowering internal teams to self-serve access to key insights necessary to inform product decisions.

The missing layer: federated governance

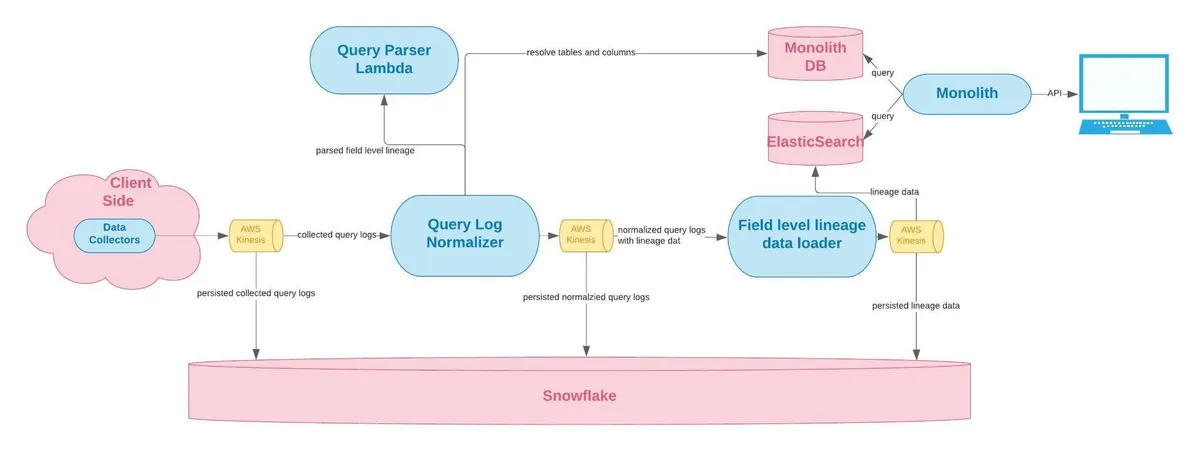

So, what about data quality? As we mentioned, the data mesh architecture mandates a universal layer of governance that ensures there is agreement around data quality, data discovery, and data product schema. This layer should include encryption for data at rest and in motion, data production lineage, data product monitoring, alerting, and logging, and data product quality metrics—no small feat.

This is where data observability comes into play. While a centralized platform like Snowflake can provide the ability to define and apply certain governance policies at the data and role level, a robust observability platform is needed to achieve the full scope of federated governance under the data mesh framework.

With a data observability platform, teams can automate monitoring and alerting across the entire data lifecycle, enabling them to proactively respond to incidents of data downtime. And since the tooling can be applied across the entire organization and data stack, it supports the standardization of data quality while leaving space for domain teams to set their own data SLAs and SLOs based on the business requirements of different assets and products.

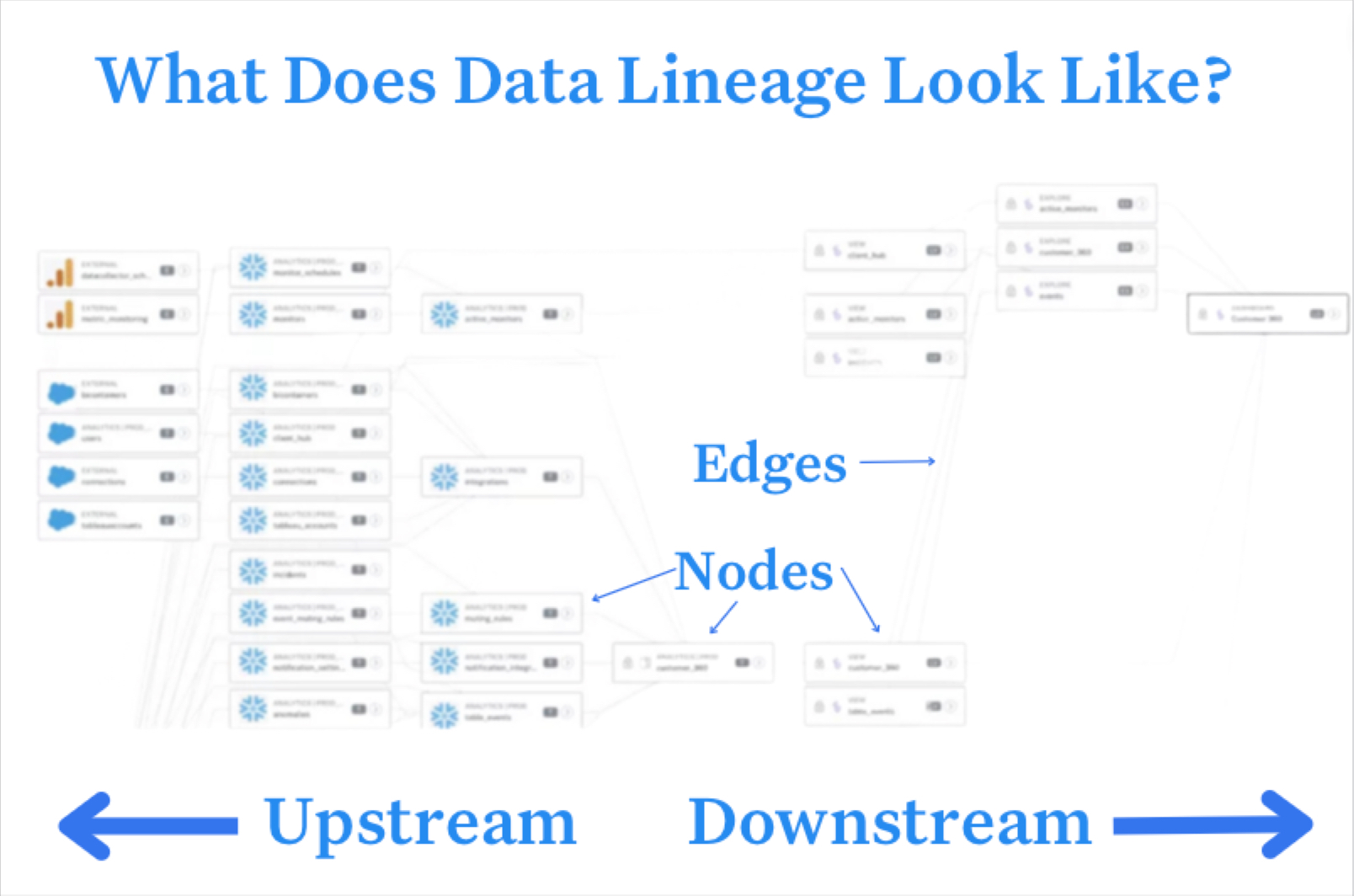

Additionally, data observability includes end-to-end lineage that helps domain teams understand the downstream impact of data issues and empowers better data discovery for teams across the organization. Data observability platforms can also help teams manage data via domains, which allow teams to partition data based on use case and access patterns.

Powerful data observability solutions like Monte Carlo make it easier for teams like Roche, Clearcover, and Kolibri Games to achieve a data mesh architecture by supporting federated governance and universal operability. (This checklist helps identify what the right data observability solution will provide.)

Disclaimer: a data mesh is NOT defined by technology alone

But as useful as platforms like Snowflake and Monte Carlo can be, technology alone does not equal a data mesh architecture. These tools can be helpful as organizations build their supporting infrastructure, but the right teams, processes, and policies have to be in place to put the technology to use.

For Zhamak’s definition of “a socio-technical shift” to come to fruition, cultural buy-in and support from leadership is essential.

“All the data-driven initiatives and data platform investments are so highly visible and so highly political in organizations, especially large organizations, that there has to be top-down support and top-down evangelism,” Zhamak described in a recent conversation with our founder Barr Moses.

Additionally, Zhamak says, there must be bottom-up adoption and advocacy from the actual teams expected to take on ownership of their data products. “If the domains aren’t on board, all we’re doing is overengineering the distribution of data among a centralized team.

So the data mesh is not a technical solution or even a subset of technologies. It’s an organizational paradigm for how companies manage and operationalize data.

But the data mesh does require a specific kind of infrastructure to succeed, and just like any data infrastructure, having the right combination of open-source or SaaS tools will ensure scalability, speed, and reliability as you journey towards adopting the data mesh framework.

Want to learn more about how to build a data mesh-ready infrastructure in your organization? Reach out to Matt and the rest of our team for more information about how Snowflake and Monte Carlo work together to deliver the technical foundation and essential layer of governance you’ll need.

Product demo.

Product demo.  What is data observability?

What is data observability?  What is a data mesh--and how not to mesh it up

What is a data mesh--and how not to mesh it up  The ULTIMATE Guide To Data Lineage

The ULTIMATE Guide To Data Lineage