How Vox Media Built a Post-Merger Data Stack with BigQuery, dbt, and Monte Carlo

When two data-driven media companies join forces, two data teams, two data stacks, and two data strategies have to become one. That’s no easy task.

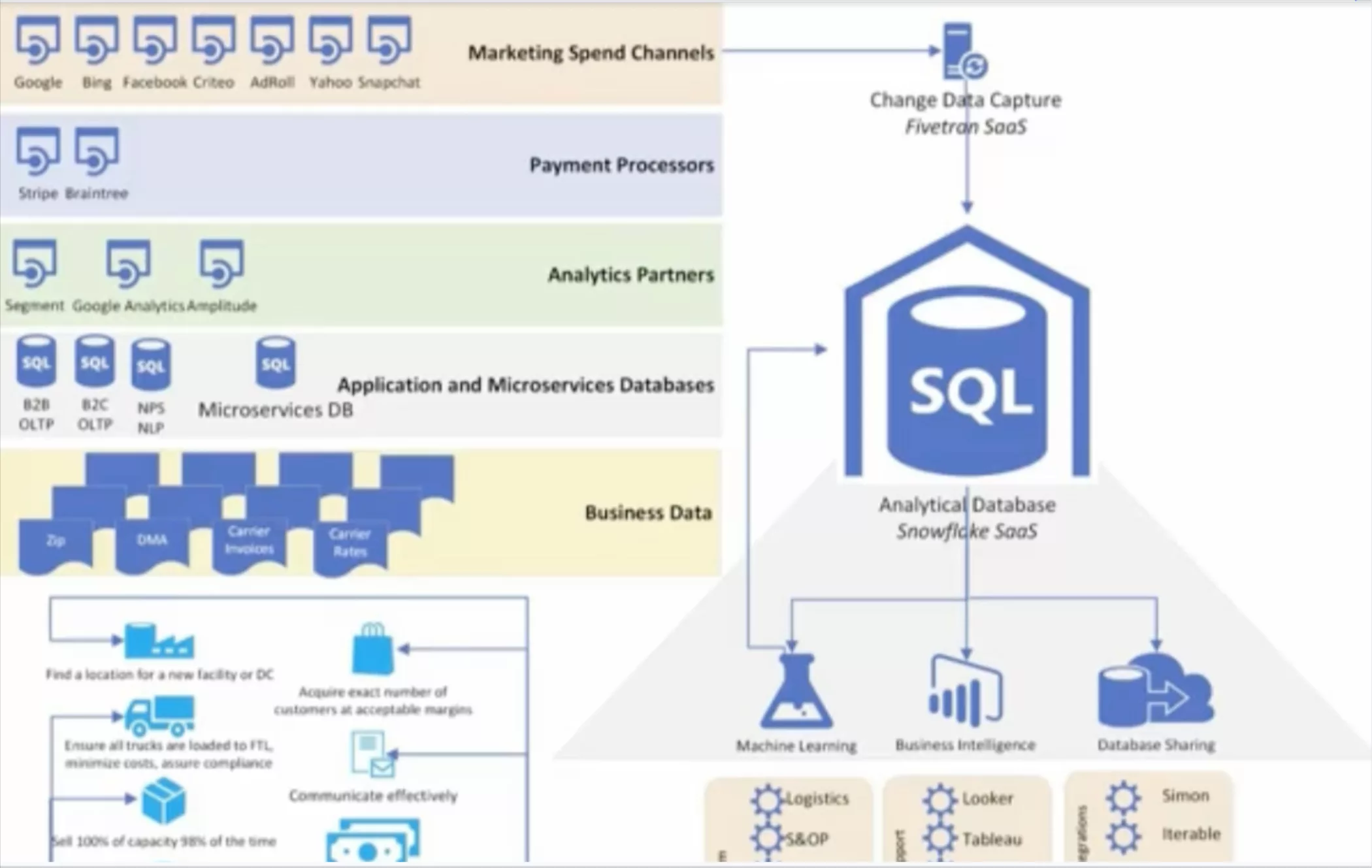

And it’s exactly what happened in 2022 when Vox Media (home to editorial properties like Vox, New York Magazine, and The Verge, along with a podcast network and a nonfiction video production and distribution studio) acquired Group Nine Media. The merger gave Vox Media access to a vast amount of data from the social media platforms where Group Nine found immense popularity with its own content brands, like The Dodo, NowThis, and PopSugar.

Today, the bigger and better Vox Media uses data to understand its audience’s interests—how they respond to and engage with Vox-produced content of all types, across many channels. That data informs editorial strategy and powers Vox’s ad targeting platforms.

But how did the data teams get there? Recently, we sat down with Vanna Trieu, then Engineering Manager of Data Products at Group Nine and now Senior Product Manager at Vox Media, to learn from his experience. Vanna helped the two organizations merge data stacks and data teams—while improving data reliability and trust across the board.

The challenge: merging and scaling tools, teams, and strategies

As Vox Media absorbed the Group Nine data ecosystem, Vanna and his team needed to find a way to understand the lay of the data land. The two companies needed to centralize a single source of truth about data, and land on a data stack that would serve the newly merged data teams.

The Group Nine data stack was mostly built in-house, rather than architected from off-the-shelf products.

“This is no shade on how Group Nine set up our data stack in the past,” Vanna said, “but we used a sledgehammer to do jobs where a regular hammer would have done perfectly fine.”

And the team was spending a lot of time on manual processes that weren’t scalable. The merger with Vox Media was an opportunity for Vanna and his team to modernize their approach. “There are frameworks and tools out there that can ably do these jobs without having us write bespoke scripts every time to move a source system into our warehouse.”

The challenge: inability to scale manual testing

Data quality was an important area where the team had room to improve. They were writing manual tests for data quality checks, with spotty coverage and an overall lack of visibility into the data ecosystem. It could take days to resolve data quality issues, and without reliable insights into marketing data specifically, it was difficult to power ad spending effectively.

“We needed to not just know when systems break; we also needed to understand what the impact is on the underlying data,” said Vanna. “And ultimately, to understand how it impacts our data consumers across the company.”

As the post-merger data stack took shape, it became clear that data observability would play an important role in bringing more visibility to the company’s data landscape.

The solution: end-to-end data observability

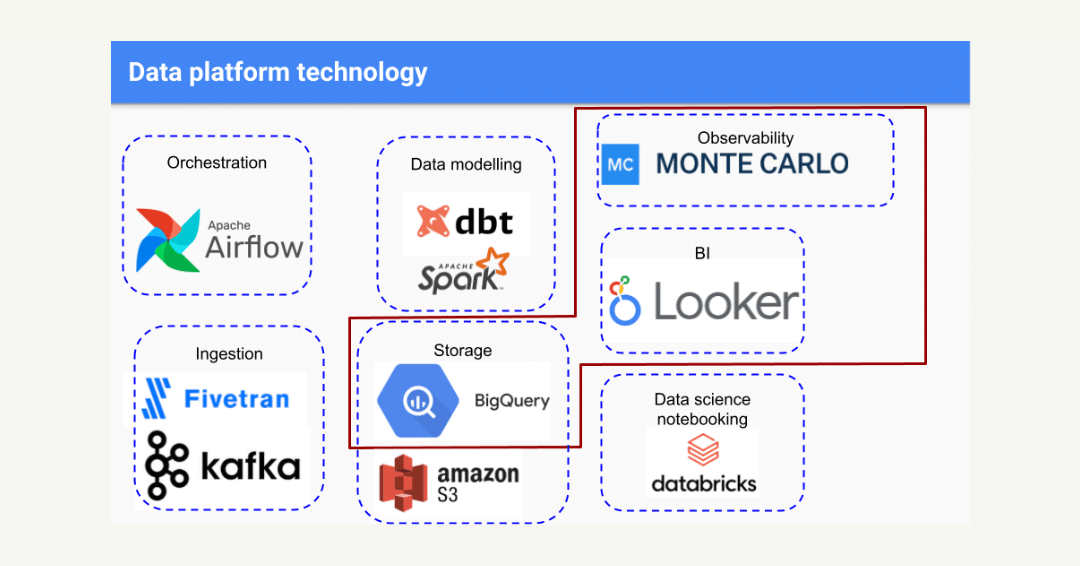

Today, the Vox Media data platform includes Fivetran, Meltano, and Airbyte for ingestion, BigQuery for warehousing, dbt for transformation, and Monte Carlo for end-to-end data observability.

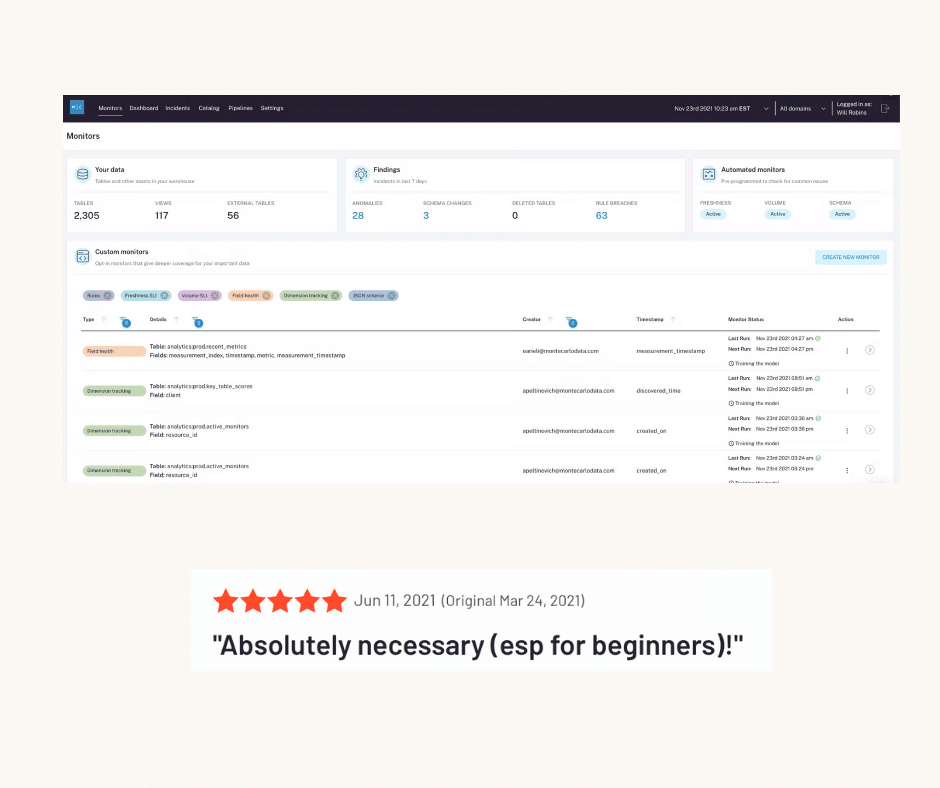

With automated monitoring and alerting, Monte Carlo helps the data team at Vox be the first to know when issues like schema changes or pipeline delays cause data to break. In many cases, they’re able to fix issues quickly, before the downstream customers are affected.

And, more importantly to Vanna, the data lineage provided by Monte Carlo explains why those outages occur.

“Knowing that a DAG broke or that a dbt run failed doesn’t speak to what actually has occurred in the underlying data structures,” said Vanna. “What does that actually mean? How does this impact the data? How does this impact your users? Does this mean that the numbers will look funky in a dashboard or a report that they’re accessing in Looker?”
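To make the idea of lineage-based impact analysis concrete, here is a minimal sketch of how a failed asset can be traced to the tables and dashboards that depend on it. It is a generic illustration and not Monte Carlo's API or Vox Media's implementation; the `LINEAGE` map, the asset names, and the `downstream_assets` helper are all hypothetical.

```python
from collections import deque

# Hypothetical lineage map: each asset points to the assets that read from it.
LINEAGE = {
    "raw.video_metrics": ["stg.video_metrics"],
    "stg.video_metrics": ["mart.video_performance"],
    "mart.video_performance": ["looker.video_dashboard", "looker.weekly_report"],
}


def downstream_assets(lineage, failed):
    """Breadth-first walk returning every asset downstream of `failed`."""
    seen = set()
    order = []
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:  # visit each asset once, even with shared parents
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


if __name__ == "__main__":
    # Which assets could show "funky" numbers if the staging model breaks?
    for asset in downstream_assets(LINEAGE, "stg.video_metrics"):
        print(asset)
```

Walking the graph from the failed node answers the "who is affected?" question directly: anything in the returned list, including the Looker assets at the end of the chain, may be showing stale or incorrect numbers.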

Data observability gave Vanna and his team the visibility and improved troubleshooting they needed to answer these burning questions. “We at Vox Media believe that having data observability directly contributes to our understanding of data quality,” said Vanna. “And we are really happy that Monte Carlo was able to provide that for us.”

Outcome: More reliable insights to power business decisions

Automated monitoring and end-to-end lineage help the data team resolve key issues quickly. And by building custom checks for business-critical assets, Vox Media keeps valuable insights flowing to marketing and editorial teams who rely on them to make vital business decisions.

“We collect daily engagement metrics on how our videos are performing, like views, likes, comments, and reactions,” said Vanna. “And we want to know if the metrics aren’t being collected, or if there are any sort of statistical anomalies that we should be checking for. So we have a custom check within Monte Carlo that meets the criteria we’ve set for the videos and our expectations for video performance.”
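A check like the one Vanna describes can be sketched in a few lines. The example below is only an illustration under stated assumptions: it is not Monte Carlo's custom-check syntax, and the function name `check_daily_metric`, the sample view counts, and the z-score threshold of 3 are all hypothetical. It flags two conditions from the quote: a metric that was not collected, and a value that is statistically unusual compared with recent history.

```python
from statistics import mean, stdev


def check_daily_metric(history, today, z_threshold=3.0):
    """Return a list of issue strings for today's value of one metric.

    history: prior daily values for the metric (hypothetical sample data)
    today:   today's value, or None if nothing was collected
    """
    if today is None:
        return ["missing: no value collected today"]
    if len(history) < 2:
        return []  # not enough history to judge an anomaly

    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        if today != mu:
            return ["anomaly: value differs from a constant history"]
        return []

    z = (today - mu) / sigma
    if abs(z) > z_threshold:
        return [f"anomaly: z-score {z:.1f} exceeds {z_threshold}"]
    return []


if __name__ == "__main__":
    recent_views = [100, 110, 90, 105, 95]  # hypothetical daily view counts
    print(check_daily_metric(recent_views, None))
    print(check_daily_metric(recent_views, 102))
    print(check_daily_metric(recent_views, 1000))
```

A simple z-score is used here because it is easy to reason about; a production check would likely account for seasonality and the age of each video.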

Outcome: Automated issue detection and faster resolution

Vanna estimates that without observability tooling, it would take 1-2 full-time data engineers to handle data quality issue detection and resolution at Vox Media. With Monte Carlo, his team saves time and money.

And, since they aren’t spending valuable time firefighting data issues or building custom anomaly detection algorithms, Vox Media’s data specialists can focus on generating better insights and innovating new ways to harness and use audience data.

Outcome: Making it possible to explore self-serve data

Now that its data has become more trustworthy and reliable, Vox Media is looking to make it more accessible and introduce self-service data products to more users across the organization. “We want to enable insights across the business, and across all levels,” said Vanna. “So we’re looking to reconcile data wherever it sits: in a warehouse, our data lake, or another external warehouse (based on Vox Media’s trend of acquiring companies).”

At every stage, Monte Carlo will help ensure that data is accurate, up-to-date, and reliable. If you’d like to learn more about instilling confidence in the data that flows throughout your own stack, contact the Monte Carlo team to schedule a demo of our data observability platform.
