Barr Moses: My Top 5 Articles of 2022

I don’t see myself as a writer or blogger. In fact, the first blog post I published on Medium sat as a draft for months. (Data downtime, anyone?)

Prior to launching Monte Carlo, I interviewed hundreds of data leaders. I gained so much insight into their hopes, dreams, and fears that the impulse to share finally exceeded the anxiety of publishing. And there was no turning back.

I still talk to data leaders every day–it’s an immutable component of my schedule (not to mention my favorite part of my job!), and those conversations often serve as inspiration for new articles. In fact, their perspectives, experience, and encouragement are the reasons Monte Carlo and the data observability category exist today. So, thank you to our customers, partners, and the broader data community for pioneering the discourse around what it means to have reliable data.

Here’s a few of my favorite articles this year, in no particular order. Enjoy!

Table of Contents

- What’s Next for Data Engineering in 2023? 10 Predictions

- Is “Self-Service” Data’s Biggest Lie?

- How To Set KPIs For Your Data Team

- I Don’t Care How Big Your Data Is

- You Have More Data Quality Issues Than You Think

- The common thread: pay attention or pay the consequences

What’s Next for Data Engineering in 2023? 10 Predictions

I decided to try my hand at the prediction game after my friend and famed VC, Tomasz Tungus, shared his 9 predictions at our IMPACT conference. Our combined predictions for 2023 are:

- Cloud Manages 75% Of All Data Workloads by 2024 (Tomasz)

- Data Engineering Teams Will Spend 30% More Time On FinOps / Data Cloud Cost Optimization (Barr)

- Data Workloads Segment By Use (Tomasz)

- More Specialization Across the Data Team (Barr)

- Metrics Layers Unify Data Architectures (Tomasz)

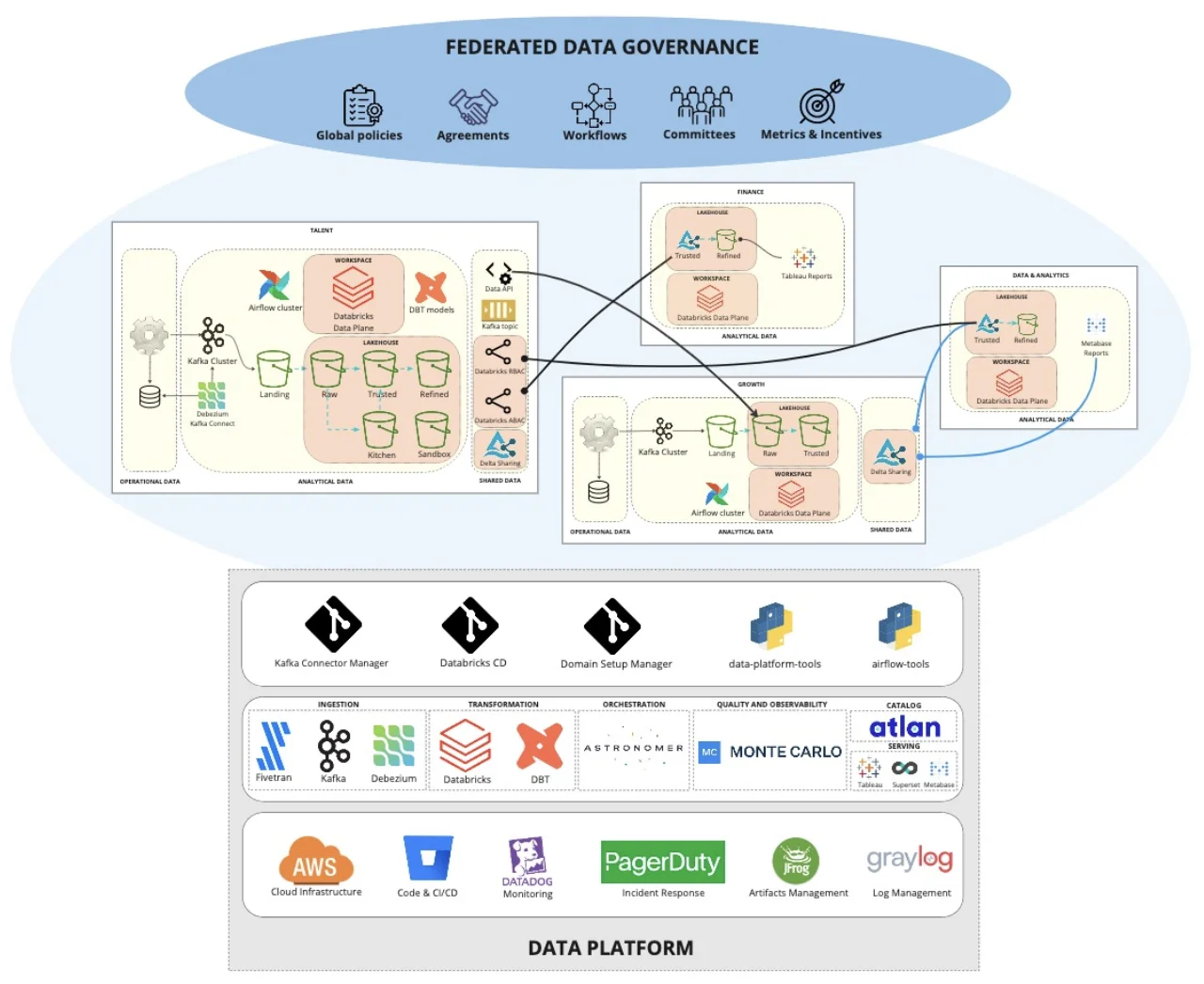

- Data Gets Meshier, But Central Data Platforms Remain (Barr)

- Notebooks Win 20% of Excel Users With Data Apps (Tomasz)

- Most machine learning models (>51%) will successfully make it to production (Barr)

- “Cloud-Prem” Becomes The Norm (Tomasz)

- Data contracts move to early stage adoption (Barr)

Is “Self-Service” Data’s Biggest Lie?

Self-service data is a fundamental principle of the data mesh and universally accepted as a worthwhile, if hard to reach goal for data teams. But should it be?

We were inspired by a debate at one of our industry events, which highlighted that this question is not quite so cut and dry.

Both sides of the case are argued in a mock courtroom before an ultimate ruling is made that while self-service data is a worthwhile endeavor, data teams must understand reality dictates processes be created to accommodate the inevitable ad-hoc request.

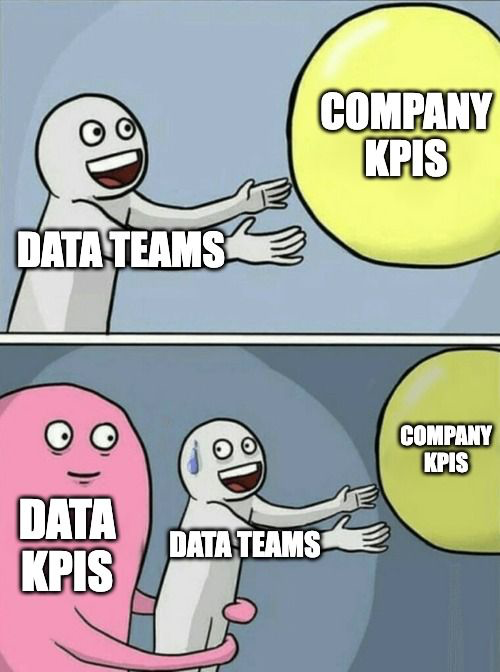

How To Set KPIs For Your Data Team

There is a certain irony in the challenge of setting robust KPIs for the data team.

But obsessing over the ideal measurement tactic isn’t the point. The important thing is to start working with your data in a formalized way towards a concrete goal.

The best steps for getting started are:

- Understand what your customers really want and need

- Make a plan, but revisit it often

- Prioritize KPIs based on your business goals

- Set goals based on solving problems, not adopting new technologies

- Dedicate time to freeform exploration

- Enable effective knowledge transfers

I Don’t Care How Big Your Data Is

There is a phenomenon in the data community where the size of our data somehow became inextricably linked to our ego.

For the vast majority of organizations, the reality is sheer size doesn’t matter. At the end of the day, managing data is all about building the stack (and collecting the data) that’s right for your company – and there’s no one-size-fits all solution.

So at the next data conference, instead of asking, ”how big is your stack?” Try questions focusing on the quality or use rather than the collection of data…or drive a really big car.

You Have More Data Quality Issues Than You Think

Most data leaders understand they have a data quality problem when they get a pile of angry emails in their inbox. Unfortunately, at that point the horse has already left the barn.

We dug into the data from our platform to help data leaders get a sense of how many data quality issues may be going uncaught (1 issue a year for every 15 tables in your environment).

We then discuss 8 factors that cause data leaders to routinely underestimate the number of data quality issues they have.

The common thread: pay attention or pay the consequences

Looking back, the theme across these articles is that data reliability isn’t something you can ignore. The best data leaders will be proactive to mitigate their risks, because the rising cost of data downtime far exceeds the cost of improving data quality – and most importantly, stakeholder trust.

##

Interested in hearing more about our perspective on the pain points impacting today’s leading data teams? Reach out to Barr, or schedule time to chat, below:

Our promise: we will show you the product.

Product demo.

Product demo.  What is data observability?

What is data observability?  What is a data mesh--and how not to mesh it up

What is a data mesh--and how not to mesh it up  The ULTIMATE Guide To Data Lineage

The ULTIMATE Guide To Data Lineage